Machine Learning for Computational Linguistics

Classifjcation Çağrı Çöltekin

University of Tübingen Seminar für Sprachwissenschaft

May 3, 2016

Practical matters Classifjcation Logistic Regression More than two classes

Practical issues

▶ Homework 1: try to program it without help from specialized

libraries (like NLTK)

▶ Time to think about projects. A short proposal towards the

end of May.

Ç. Çöltekin, SfS / University of Tübingen May 3, 2016 1 / 23 Practical matters Classifjcation Logistic Regression More than two classes

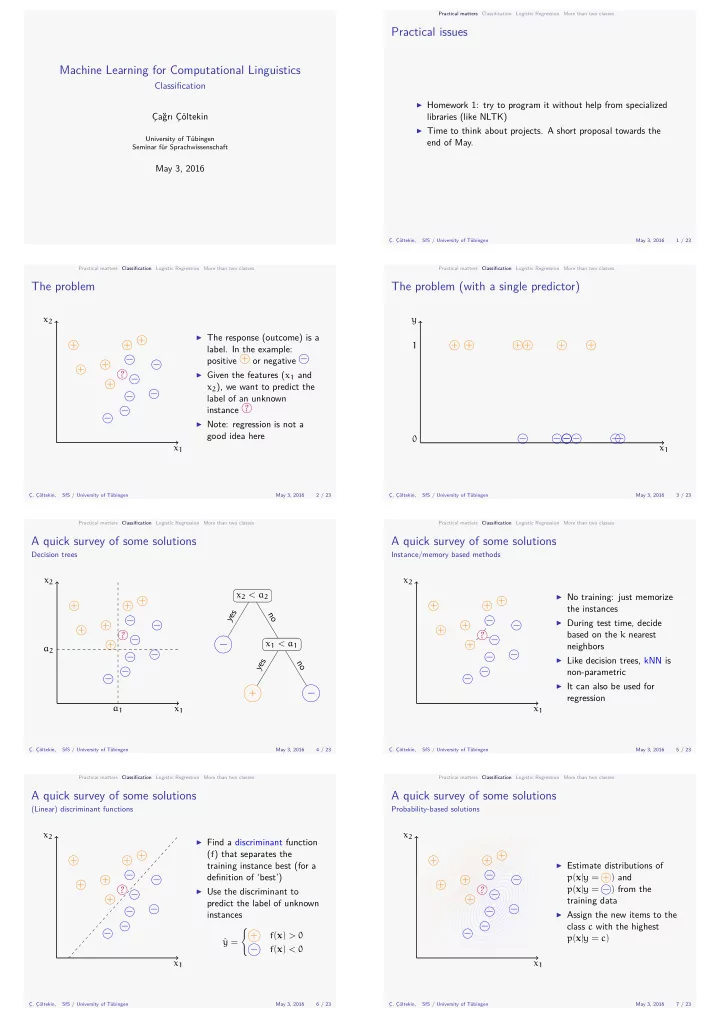

The problem

x1 x2 + + + + + + − − − − − − − ?

▶ The response (outcome) is a

- label. In the example:

positive + or negative −

▶ Given the features (x1 and

x2), we want to predict the label of an unknown instance ?

▶ Note: regression is not a

good idea here

Ç. Çöltekin, SfS / University of Tübingen May 3, 2016 2 / 23 Practical matters Classifjcation Logistic Regression More than two classes

The problem (with a single predictor)

1 y x1 + + + + + + − − − − − −

Ç. Çöltekin, SfS / University of Tübingen May 3, 2016 3 / 23 Practical matters Classifjcation Logistic Regression More than two classes

A quick survey of some solutions

Decision trees

x1 x2 + + + + + + − − − − − − − ? a1 a2 x2 < a2

−

x1 < a1

+ −

yes no no yes

Ç. Çöltekin, SfS / University of Tübingen May 3, 2016 4 / 23 Practical matters Classifjcation Logistic Regression More than two classes

A quick survey of some solutions

Instance/memory based methods

x1 x2 + + + + + + − − − − − − − ?

▶ No training: just memorize

the instances

▶ During test time, decide

based on the k nearest neighbors

▶ Like decision trees, kNN is

non-parametric

▶ It can also be used for

regression

Ç. Çöltekin, SfS / University of Tübingen May 3, 2016 5 / 23 Practical matters Classifjcation Logistic Regression More than two classes

A quick survey of some solutions

(Linear) discriminant functions

x1 x2 + + + + + + − − − − − − − ?

▶ Find a discriminant function

(f) that separates the training instance best (for a defjnition of ‘best’)

▶ Use the discriminant to

predict the label of unknown instances ˆ y = { + f(x) > 0 − f(x) < 0

Ç. Çöltekin, SfS / University of Tübingen May 3, 2016 6 / 23 Practical matters Classifjcation Logistic Regression More than two classes

A quick survey of some solutions

Probability-based solutions

x1 x2 + + + + + + − − − − − − − ?

▶ Estimate distributions of

p(x|y = +) and p(x|y = −) from the training data

▶ Assign the new items to the

class c with the highest p(x|y = c)

Ç. Çöltekin, SfS / University of Tübingen May 3, 2016 7 / 23