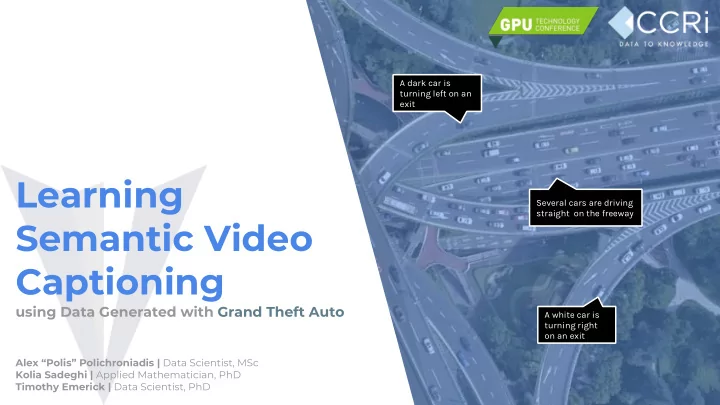

Several cars are driving straight on the freeway A white car is turning right

- n an exit

A dark car is turning left on an exit

Learning Semantic Video Captioning

using Data Generated with Grand Theft Auto

Alex “Polis” Polichroniadis | Data Scientist, MSc Kolia Sadeghi | Applied Mathematician, PhD Timothy Emerick | Data Scientist, PhD