1

the

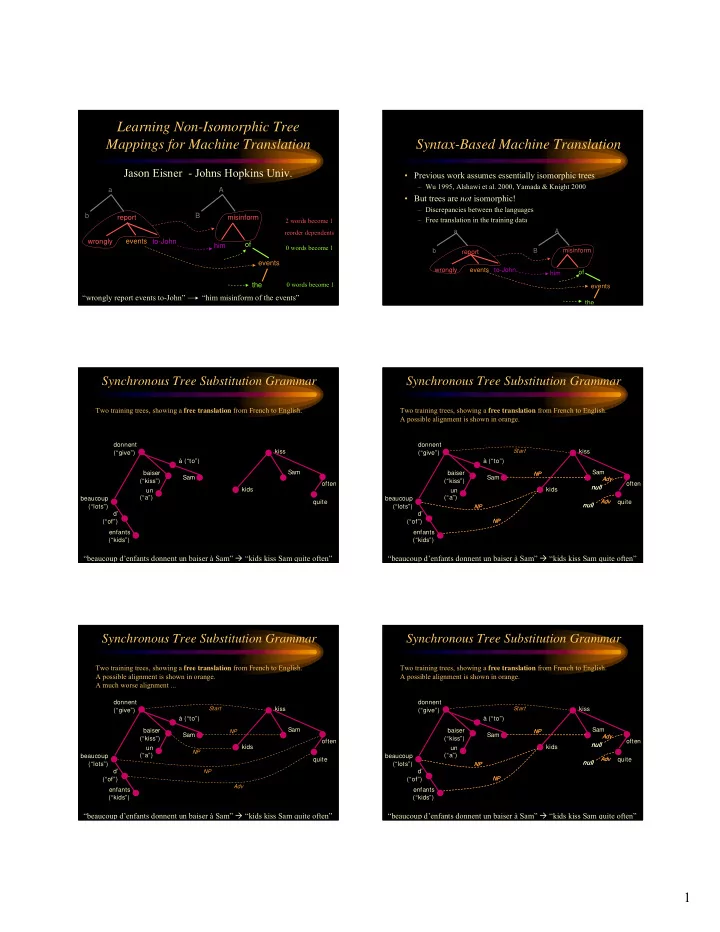

Learning Non-Isomorphic Tree Mappings for Machine Translation

Jason Eisner - Johns Hopkins Univ.

a b A B events

- f

misinform wrongly report to-John events him

“wrongly report events to-John” “him misinform of the events”

2 words become 1 reorder dependents 0 words become 1 0 words become 1

Syntax-Based Machine Translation

- Previous work assumes essentially isomorphic trees

– Wu 1995, Alshawi et al. 2000, Yamada & Knight 2000

- But trees are not isomorphic!

– Discrepancies between the languages – Free translation in the training data

the a b A B events

- f

misinform wrongly report to-John events him

Two training trees, showing a free translation from French to English.

Synchronous Tree Substitution Grammar

enfants (“kids”) d’ (“of”) beaucoup (“lots”) Sam donnent (“give”) baiser (“kiss”) un (“a”) à (“to”) kids Sam kiss quite

- ften

“beaucoup d’enfants donnent un baiser à Sam” “kids kiss Sam quite often”

enfants (“kids”) kids

NP

d’ (“of”) beaucoup (“lots”)

NP NP

Sam Sam

NP

Synchronous Tree Substitution Grammar

kiss donnent (“give”) baiser (“kiss”) un (“a”) à (“to”)

Start NP NP

null

Adv

quite null

Adv

- ften

null

Adv

null

Adv

“beaucoup d’enfants donnent un baiser à Sam” “kids kiss Sam quite often”

Two training trees, showing a free translation from French to English. A possible alignment is shown in orange.

enfants (“kids”) kids

Adv

d’ (“of”) beaucoup (“lots”)

NP

Sam Sam

NP

Synchronous Tree Substitution Grammar

kiss donnent (“give”) baiser (“kiss”) un (“a”) à (“to”)

Start NP

quite

- ften

“beaucoup d’enfants donnent un baiser à Sam” “kids kiss Sam quite often”

Two training trees, showing a free translation from French to English. A possible alignment is shown in orange. A much worse alignment ...

enfants (“kids”) kids

NP

d’ (“of”) beaucoup (“lots”)

NP NP

Sam Sam

NP

Synchronous Tree Substitution Grammar

kiss donnent (“give”) baiser (“kiss”) un (“a”) à (“to”)

Start NP NP

null

Adv

quite null

Adv

- ften

null

Adv

null

Adv

“beaucoup d’enfants donnent un baiser à Sam” “kids kiss Sam quite often”

Two training trees, showing a free translation from French to English. A possible alignment is shown in orange.