SLIDE 1

MEAN SQUARED ERROR

$$\mathrm{MSE} = \frac{1}{n} \sum_{i=1}^{n} \left(y^{(i)} - \hat{y}^{(i)}\right)^2 \in [0; \infty)$$

→ L2 loss.
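
As a minimal sketch of the definition, the MSE can be computed in plain Python as the average of the squared differences between observed and predicted values. The function name `mse` and the toy data are assumptions made for illustration; they are not taken from the slide.

```python
def mse(y_true, y_pred):
    """Mean squared error: the average of the squared prediction errors."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("need at least one observation")
    n = len(y_true)
    return sum((y - y_hat) ** 2 for y, y_hat in zip(y_true, y_pred)) / n


# Hypothetical toy data (not from the slide).
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.0, 2.0, 3.0, 6.0]

# Squared errors are 0, 0, 0, 4, so the mean is 1.0.
print(mse(y_true, y_pred))
```

The result is never negative, and it is zero only when every prediction matches its observation exactly, which matches the range [0; ∞) stated above.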

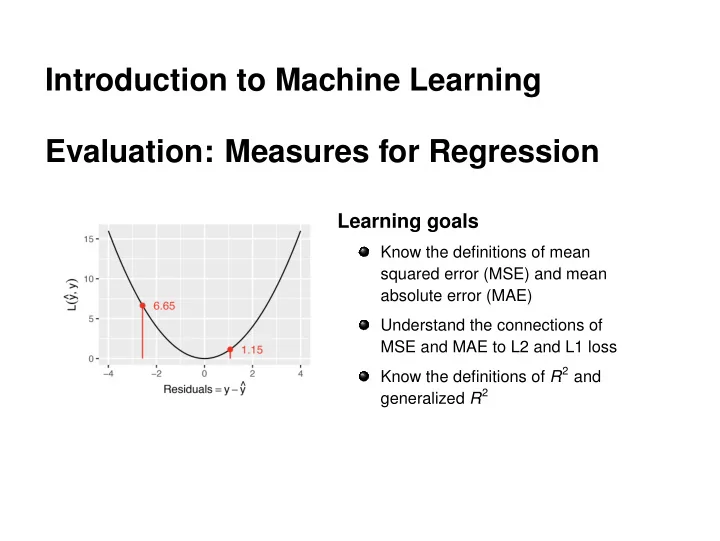

Single observations with a large prediction error heavily influence the MSE, as they enter quadratically.
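
The quadratic effect can be illustrated with a short sketch that measures how much each observation contributes to the sum of squared errors. Two of the residuals below reuse the values 6.65 and 1.15 that appear in the slide's figure; the other two residuals and the helper name `squared_error_shares` are hypothetical.

```python
def squared_error_shares(residuals):
    """Return each residual's share of the total squared error."""
    squared = [r ** 2 for r in residuals]
    total = sum(squared)
    return [s / total for s in squared]


# 1.15 and 6.65 appear in the slide's figure; 0.5 and -0.5 are made up.
residuals = [0.5, -0.5, 1.15, 6.65]
shares = squared_error_shares(residuals)

for r, share in zip(residuals, shares):
    print(f"residual {r:6.2f} -> share of squared error {share:.1%}")
```

In this made-up sample the residual of 6.65 is less than six times as large as the residual of 1.15, yet its squared error is more than thirty times larger, so it accounts for roughly 96% of the total and therefore of the MSE.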

[Figure: data plotted as y against x, with residuals 6.65 and 1.15 annotated, alongside the loss L(ŷ, y) plotted against the residuals y − ŷ.]

Similar measures: sum of squared errors (SSE) and root mean squared error (RMSE), which brings the measurement back to the original scale of the outcome.
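
The relationship between the three measures can be sketched as follows: SSE is the plain sum of squared errors, MSE divides it by n, and RMSE takes the square root of the MSE. The function names and the toy data are assumptions for illustration only.

```python
import math


def sse(y_true, y_pred):
    """Sum of squared errors."""
    return sum((y - y_hat) ** 2 for y, y_hat in zip(y_true, y_pred))


def mse(y_true, y_pred):
    """Mean squared error: SSE divided by the number of observations."""
    return sse(y_true, y_pred) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean squared error: square root of the MSE."""
    return math.sqrt(mse(y_true, y_pred))


# Hypothetical toy data (not from the slide): one prediction is off by 4.
y_true = [2.0, 4.0, 6.0, 8.0]
y_pred = [2.0, 4.0, 6.0, 12.0]

print(sse(y_true, y_pred))   # 16.0
print(mse(y_true, y_pred))   # 4.0
print(rmse(y_true, y_pred))  # 2.0
```

If the outcome were measured in metres, the SSE and MSE here would be in square metres, while the RMSE of 2.0 would again be in metres.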

© Introduction to Machine Learning – 1 / 4