Introduction to Deep Learning

Is it a question?

Given training data with categories A and B, say well drilling sites with different outcomes

◮ face recognition
◮ optical character recognition
◮ speech recognition
◮ object recognition
◮ playing the game of Go, where it has in fact defeated human champions

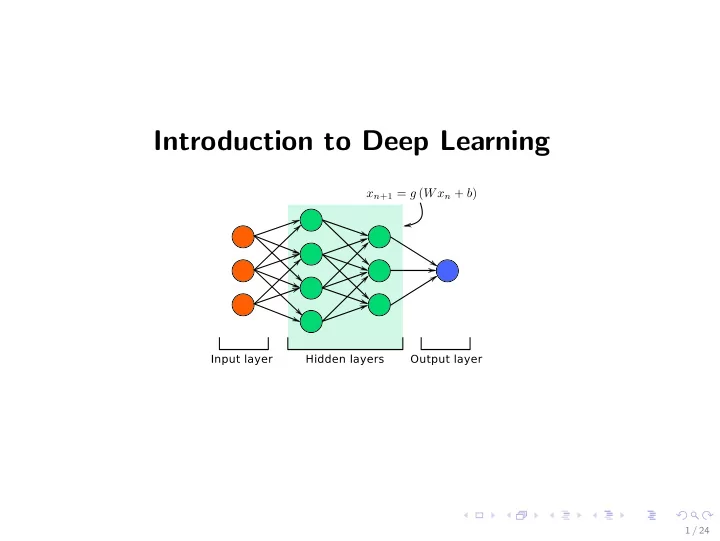

◮ Deep Learning = multilayered Artificial Neural Network (ANN).

◮ A simple ANN with four layers

◮ An ANN in mathematical terms

◮ $p := \{(W^{[2]}, b^{[2]}), (W^{[3]}, b^{[3]}), (W^{[4]}, b^{[4]})\}$ are parameters to be

◮ $\sigma(\cdot)$ is an activation function, say the sigmoid function
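
A four-layer network with parameters (W[2], b[2]), (W[3], b[3]), (W[4], b[4]) and a sigmoid activation can be sketched in plain Python as follows. This is only an illustration: the layer widths (2, 2, 3, 2), the parameter values, and the helper names `layer` and `forward` are assumptions, not taken from the slides.

```python
import math

def sigmoid(z):
    """Logistic sigmoid of a single number."""
    return 1.0 / (1.0 + math.exp(-z))

def layer(W, b, a):
    """One layer: apply sigmoid to each component of W a + b (W is a list of rows)."""
    return [sigmoid(sum(w * x for w, x in zip(row, a)) + bias)
            for row, bias in zip(W, b)]

def forward(p, x):
    """Feed the input x (layer 1) through layers 2, 3 and 4 in turn."""
    a = x
    for W, b in p:
        a = layer(W, b, a)
    return a

# Hypothetical layer widths 2 -> 2 -> 3 -> 2 with made-up parameter values.
p = [
    ([[0.5, -0.5], [0.25, 0.75]], [0.0, 0.1]),                   # (W[2], b[2])
    ([[1.0, -1.0], [0.5, 0.5], [-0.5, 1.0]], [0.0, 0.0, -0.1]),  # (W[3], b[3])
    ([[0.3, -0.2, 0.1], [-0.3, 0.2, 0.4]], [0.05, -0.05]),       # (W[4], b[4])
]

output = forward(p, [1.0, 2.0])
print(output)  # two numbers, each strictly between 0 and 1
```

Because every layer ends in a sigmoid, each output component lies in (0, 1), which is convenient when the two outputs are read as scores for categories A and B.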

◮ The objective of training is to “minimize” a properly defined cost function, $\min_p \mathrm{Cost}(p) \equiv \frac{1}{m} \sum_{i=1}^{m} \| \cdots \|^2$,

◮ Steepest/gradient descent
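
The training idea above, minimizing a cost by steepest descent, can be sketched as below. Several things here are assumptions made for illustration: the cost is taken to be a mean squared error over the m training points, the network is replaced by a single sigmoid neuron (`model`) to keep the example short, the gradient is approximated by central finite differences rather than back-propagation, and the step size `eta` and the toy data are made up.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def model(p, x):
    """Hypothetical stand-in for the network: one sigmoid neuron, p = [w, b]."""
    w, b = p
    return sigmoid(w * x + b)

def cost(p, xs, ys):
    """Assumed cost: mean over the m training points of the squared error."""
    m = len(xs)
    return sum((y - model(p, x)) ** 2 for x, y in zip(xs, ys)) / m

def numerical_gradient(f, p, h=1e-6):
    """Central finite-difference approximation of the gradient of f at p."""
    grad = []
    for i in range(len(p)):
        up, down = list(p), list(p)
        up[i] += h
        down[i] -= h
        grad.append((f(up) - f(down)) / (2 * h))
    return grad

def gradient_descent(f, p0, eta=0.5, steps=500):
    """Repeat the update p <- p - eta * grad f(p) a fixed number of times."""
    p = list(p0)
    for _ in range(steps):
        g = numerical_gradient(f, p)
        p = [pi - eta * gi for pi, gi in zip(p, g)]
    return p

# Toy data: category A (label 0) on the left, category B (label 1) on the right.
xs = [-2.0, -1.0, 1.0, 2.0]
ys = [0.0, 0.0, 1.0, 1.0]

p0 = [0.0, 0.0]
p_final = gradient_descent(lambda p: cost(p, xs, ys), p0)
print(cost(p0, xs, ys), cost(p_final, xs, ys))
```

Finite differences need two cost evaluations per parameter per step, so this only makes sense for a handful of parameters; for real networks the gradient is normally computed analytically.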

◮ large labeled datasets;
◮ improved hardware;
◮ clever parameter constraints;
◮ advancements in optimization algorithms;
◮ more open sharing of stable, reliable code leveraging the latest in

◮ learning to model and parameterization
◮ capable of self-enhancement
◮ generic computation architecture
◮ executable on local HPC and on cloud
◮ broadly applicable but requires good understanding of the underlying
