
Graph-based Clustering

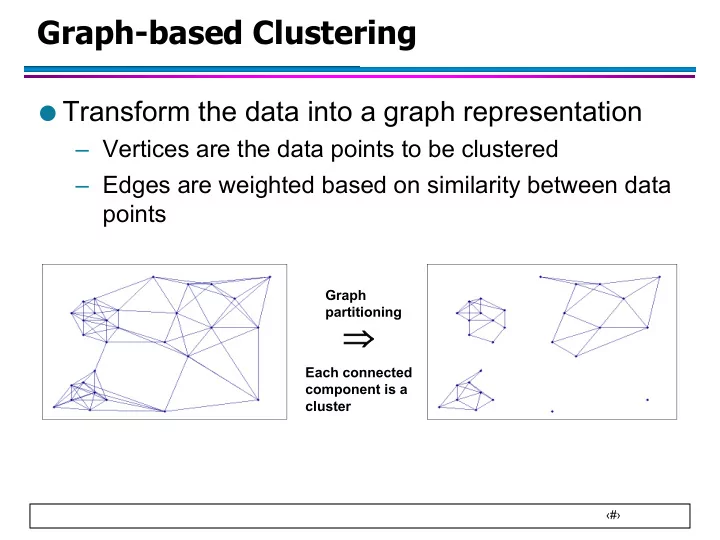

- Transform the data into a graph representation
  - Vertices are the data points to be clustered
  - Edges are weighted based on the similarity between data points

⇒ Graph partitioning: each connected component is a cluster


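$$\text{Cut}(V_1, V_2) = \sum_{i \in V_1,\ j \in V_2} w_{ij}$$

[Figure: two partitions of the same weighted graph (edge weights 0.1, 0.3, 0.1, 0.1, 0.2), with Cut = 0.2 and Cut = 0.4]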

w_ij is the weight of the edge between nodes i and j
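A minimal sketch of the cut computation. The 6-node graph below is hypothetical: its five edge weights (0.1, 0.3, 0.1, 0.1, 0.2) are arranged so that three splits reproduce the cut values quoted on these slides (0.2, 0.4 and 0.1), but the exact topology is an assumption, not data read from the original figure. The helper names `weight_matrix` and `cut` are illustrative.

```python
# Hypothetical 6-node graph (nodes 0..5). Topology and weights are an
# assumption chosen to match the quoted cut values, not the slide's data.
EDGES = [(0, 2, 0.1), (1, 2, 0.3), (2, 3, 0.2), (3, 4, 0.1), (3, 5, 0.1)]
N = 6


def weight_matrix(edges, n):
    """Build a symmetric n x n weight matrix from (i, j, w_ij) triples."""
    w = [[0.0] * n for _ in range(n)]
    for i, j, wij in edges:
        w[i][j] = wij
        w[j][i] = wij
    return w


def cut(w, v1):
    """Cut(V1, V2): sum of w_ij over i in V1 and j in V2 (V2 = all other nodes)."""
    v1 = set(v1)
    v2 = [j for j in range(len(w)) if j not in v1]
    return sum(w[i][j] for i in v1 for j in v2)


W = weight_matrix(EDGES, N)
for part in ({0, 1, 2}, {0, 1}, {0}):
    print(sorted(part), round(cut(W, part), 2))
```

The three splits print cuts of 0.2, 0.4 and 0.1. The smallest cut simply isolates one weakly connected node, which motivates the balanced criteria that follow.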

[Figure: a third partition of the same weighted graph, with Cut = 0.1]

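$$\text{Ratio cut}(V_1, V_2) = \frac{\text{Cut}(V_1, V_2)}{|V_1|} + \frac{\text{Cut}(V_1, V_2)}{|V_2|}$$

$$\text{Normalized cut}(V_1, V_2) = \frac{\text{Cut}(V_1, V_2)}{\sum_{i \in V_1} d_i} + \frac{\text{Cut}(V_1, V_2)}{\sum_{j \in V_2} d_j}, \qquad d_i = \sum_{j} w_{ij}$$

V_1 and V_2 are the sets of nodes in partitions 1 and 2; |V_i| is the number of nodes in partition V_i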

[Figure: two partitions of the weighted graph (edge weights 0.1, 0.3, 0.1, 0.1, 0.2)]

- First partition: Cut = 0.1, Ratio cut = 0.1/1 + 0.1/5 = 0.12, Normalized cut = 0.1/0.1 + 0.1/1.5 = 1.07
- Second partition: Cut = 0.2, Ratio cut = 0.2/3 + 0.2/3 = 0.13, Normalized cut = 0.2/1 + 0.2/0.6 = 0.53
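The example numbers can be checked with a short sketch of both criteria. It reuses a hypothetical 6-node graph whose topology is an assumption chosen to be consistent with the quoted values: splitting off node 0 gives |V_1| = 1 with volume 0.1, and the balanced split gives volumes 1.0 and 0.6. The function names are illustrative.

```python
# Hypothetical weighted graph (an assumption consistent with the example values).
EDGES = [(0, 2, 0.1), (1, 2, 0.3), (2, 3, 0.2), (3, 4, 0.1), (3, 5, 0.1)]
N = 6


def weight_matrix(edges, n):
    """Symmetric weight matrix from (i, j, w_ij) triples."""
    w = [[0.0] * n for _ in range(n)]
    for i, j, wij in edges:
        w[i][j] = w[j][i] = wij
    return w


def cut(w, v1):
    """Total weight of edges crossing from V1 to its complement."""
    return sum(w[i][j] for i in v1 for j in range(len(w)) if j not in v1)


def ratio_cut(w, v1):
    """Cut/|V1| + Cut/|V2|."""
    v1 = set(v1)
    c = cut(w, v1)
    return c / len(v1) + c / (len(w) - len(v1))


def normalized_cut(w, v1):
    """Cut/vol(V1) + Cut/vol(V2), where vol sums the degrees d_i = sum_j w_ij."""
    v1 = set(v1)
    degree = [sum(row) for row in w]
    vol1 = sum(degree[i] for i in v1)
    vol2 = sum(degree) - vol1
    c = cut(w, v1)
    return c / vol1 + c / vol2


W = weight_matrix(EDGES, N)
for part in ({0}, {0, 1, 2}):
    print(sorted(part), round(cut(W, part), 2),
          round(ratio_cut(W, part), 2), round(normalized_cut(W, part), 2))
```

Both criteria prefer the balanced split (0.13 and 0.53) over isolating a single node (0.12 and 1.07 are not both smaller), whereas the plain cut prefers the unbalanced one.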

If the graph is unweighted (or all edges have the same weight):

[Figure: the same two partitions with every edge weight equal to 1]

- First partition: Cut = 1, Ratio cut = 1/1 + 1/5 = 1.2, Normalized cut = 1/1 + 1/9 = 1.11
- Second partition: Cut = 1, Ratio cut = 1/3 + 1/3 = 0.67, Normalized cut = 1/5 + 1/5 = 0.4
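Repeating the computation with every weight set to 1 is a quick consistency check. The topology is the same hypothetical 6-node graph (an assumption): both splits cut exactly one edge, and the balanced split has volumes 5 and 5, so its normalized cut is 1/5 + 1/5.

```python
# Same hypothetical topology as the weighted example, all weights equal to 1.
EDGES = [(0, 2), (1, 2), (2, 3), (3, 4), (3, 5)]
N = 6
W = [[0] * N for _ in range(N)]
for i, j in EDGES:
    W[i][j] = W[j][i] = 1


def cut(v1):
    """Number of edges leaving V1 (unit weights)."""
    return sum(W[i][j] for i in v1 for j in range(N) if j not in v1)


def ratio_cut(v1):
    c = cut(v1)
    return c / len(v1) + c / (N - len(v1))


def normalized_cut(v1):
    degree = [sum(row) for row in W]
    vol1 = sum(degree[i] for i in v1)
    c = cut(v1)
    return c / vol1 + c / (sum(degree) - vol1)


for part in ({0}, {0, 1, 2}):
    print(sorted(part), cut(part),
          round(ratio_cut(part), 2), round(normalized_cut(part), 2))
```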

- Example: METIS graph partitioning

- This leads to a class of algorithms known as spectral clustering

Degree matrix D (diagonal):

$$D_{ii} = \sum_{k=1}^{n} w_{ik}, \qquad D_{ij} = 0 \ \text{ for } i \neq j$$

Two clusters: the similarity matrix W and the degree matrix D are both block-diagonal, with two blocks

The Laplacian also has a block structure, with two blocks
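A small sketch of the block structure, assuming the standard unnormalized Laplacian L = D − W. The two-cluster graph is hypothetical: two 3-node components with no edges between them, so every entry of L that links the blocks is zero.

```python
# Hypothetical two-cluster graph: components {0, 1, 2} and {3, 4, 5},
# with no edge between them (unit weights).
EDGES = [(0, 2), (1, 2), (3, 4), (3, 5)]
N = 6

W = [[0] * N for _ in range(N)]
for i, j in EDGES:
    W[i][j] = W[j][i] = 1

# Degree matrix: D_ii = sum_k w_ik, zero off the diagonal.
D = [[sum(W[i]) if i == j else 0 for j in range(N)] for i in range(N)]

# Unnormalized graph Laplacian L = D - W (assumed definition).
L = [[D[i][j] - W[i][j] for j in range(N)] for i in range(N)]

for row in L:
    print(row)

# Entries linking the two blocks are zero, so L is block-diagonal.
block1, block2 = {0, 1, 2}, {3, 4, 5}
print(all(L[i][j] == 0 for i in block1 for j in block2))
```

Every row of L sums to zero, which is why the all-ones vector restricted to a block is an eigenvector with eigenvalue 0.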


- Which clustering method is appropriate for a …
- How does one determine whether the results of …
- How do you know when you have a good set of …
- Is it unusual to find a cluster as compact and …
- How to guard against elaborate interpretation of …

[Figure: K-means (K = 3) applied to 100 uniformly distributed 2D data points]
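The point of this figure can be reproduced with a minimal sketch of Lloyd's k-means: it always returns K groups, even for uniform data with no cluster structure. The data, the seed and the initialization (K distinct random data points) are assumptions, not the slide's setup.

```python
import random

random.seed(42)
K = 3
# 100 points drawn uniformly from the unit square: no real cluster structure.
points = [(random.random(), random.random()) for _ in range(100)]


def dist2(p, q):
    """Squared Euclidean distance between two 2D points."""
    return (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2


def assign(points, centroids):
    """Index of the nearest centroid for every point."""
    return [min(range(len(centroids)), key=lambda c: dist2(p, centroids[c]))
            for p in points]


def kmeans(points, k, max_iter=300):
    """Lloyd's algorithm, initialized with k distinct random data points."""
    centroids = random.sample(points, k)
    labels = None
    for _ in range(max_iter):
        new_labels = assign(points, centroids)
        if new_labels == labels:
            break  # assignments stopped changing: converged
        labels = new_labels
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster empties out
                centroids[c] = (sum(p[0] for p in members) / len(members),
                                sum(p[1] for p in members) / len(members))
    return labels, centroids


labels, centroids = kmeans(points, K)
print([labels.count(c) for c in range(K)])
```

The algorithm reports three groups regardless, so the existence of a clustering says nothing about whether the structure is real; that is what the validity questions above are about.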

- … distance as a similarity
- … merging clusters
- … elements, according to the chosen distance
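The merging procedure described in these fragments can be sketched as naive agglomerative clustering with the single-link criterion (the criterion named in the face example). The cluster distance is the smallest distance between any two of their elements. The toy 1D data and the function name are illustrative assumptions.

```python
def single_link(points, k, dist):
    """Agglomerative clustering with the single-link criterion.

    Starts with every point in its own cluster and repeatedly merges the two
    clusters whose closest members are nearest, until k clusters remain.
    Naive O(n^3) version, for illustration only.
    """
    clusters = [{i} for i in range(len(points))]
    while len(clusters) > k:
        best = None  # (distance, index_a, index_b)
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                d = min(dist(points[i], points[j])
                        for i in clusters[a] for j in clusters[b])
                if best is None or d < best[0]:
                    best = (d, a, b)
        _, a, b = best
        clusters[a] |= clusters[b]
        del clusters[b]  # b > a, so index a is unaffected
    return clusters


points = [0.0, 1.0, 2.0, 10.0, 11.0, 20.0]  # toy 1D data
clusters = single_link(points, 3, lambda p, q: abs(p - q))
print(clusters)
```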


depicted by detailed full body portraits

Shape alignment: 127 facial landmarks

How to validate the clusters or groups?

[Figure: single-link clusters, numbered 1 to 10, computed from the 127 landmarks]


Khmer Dance and Cultural Center


Clustering with large weights assigned to the chin and nose: example devata faces from the clusters differ largely in the chin and nose, reflecting the weights chosen for the similarity measure
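One way per-region weights can enter a similarity is a weighted Euclidean distance between aligned landmark sets. The sketch below assumes that scheme; the weighting actually used for the devata faces is not given here, and the 3-landmark "faces" and the function name are hypothetical.

```python
import math


def weighted_shape_distance(shape_a, shape_b, weights):
    """Weighted Euclidean distance between two aligned landmark sets.

    shape_a, shape_b: lists of (x, y) landmarks in the same order.
    weights: one non-negative weight per landmark (hypothetical scheme).
    """
    return math.sqrt(sum(
        w * ((xa - xb) ** 2 + (ya - yb) ** 2)
        for (xa, ya), (xb, yb), w in zip(shape_a, shape_b, weights)
    ))


# Toy 3-landmark shapes; landmark 0 stands in for a heavily weighted region
# such as the chin (illustrative values only).
base = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
moved_0 = [(0.0, 0.5), (1.0, 0.0), (0.0, 1.0)]  # landmark 0 shifted by 0.5
moved_1 = [(0.0, 0.0), (1.0, 0.5), (0.0, 1.0)]  # landmark 1 shifted by 0.5
weights = [4.0, 1.0, 1.0]

print(weighted_shape_distance(base, moved_0, weights))
print(weighted_shape_distance(base, moved_1, weights))
```

The same 0.5 displacement counts twice as much in distance when it hits the heavily weighted landmark, so clusters end up separated mainly by those regions.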

2D MDS Projection of the Similarity matrix


3D MDS Projection of the Similarity matrix
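A minimal sketch of classical (Torgerson) MDS, one common way to produce such projections. MDS works on dissimilarities, so a similarity matrix must first be converted (for example d = 1 − s); that conversion, the toy data and the function name are assumptions. Passing `dim=3` gives a 3D projection.

```python
import numpy as np


def classical_mds(dist, dim=2):
    """Embed points so that Euclidean distances approximate `dist`."""
    n = dist.shape[0]
    j = np.eye(n) - np.ones((n, n)) / n      # centering matrix
    b = -0.5 * j @ (dist ** 2) @ j           # double-centered Gram matrix
    vals, vecs = np.linalg.eigh(b)           # ascending eigenvalues
    top = np.argsort(vals)[::-1][:dim]       # indices of the `dim` largest
    scale = np.sqrt(np.clip(vals[top], 0.0, None))
    return vecs[:, top] * scale


# Illustrative data: Euclidean distances between 5 random 2D points.
rng = np.random.default_rng(0)
pts = rng.random((5, 2))
dist = np.linalg.norm(pts[:, None, :] - pts[None, :, :], axis=-1)

emb = classical_mds(dist, dim=2)
emb_dist = np.linalg.norm(emb[:, None, :] - emb[None, :, :], axis=-1)
print(np.allclose(dist, emb_dist))
```

For distances that are exactly Euclidean in two dimensions, the 2D embedding reproduces them up to rotation and reflection. For a general similarity matrix the projection is only an approximation.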


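$$\text{Cut}(V_1, V_2) = \sum_{i \in V_1,\ j \in V_2} w_{ij}$$

$$\text{Ratio cut}(V_1, V_2) = \frac{\text{Cut}(V_1, V_2)}{|V_1|} + \frac{\text{Cut}(V_1, V_2)}{|V_2|}, \qquad \text{Normalized cut}(V_1, V_2) = \frac{\text{Cut}(V_1, V_2)}{\sum_{i \in V_1} d_i} + \frac{\text{Cut}(V_1, V_2)}{\sum_{j \in V_2} d_j}$$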

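Define the vector x by

$$x_i = \begin{cases} \sqrt{|V_2| / |V_1|} & \text{if } v_i \in V_1 \\ -\sqrt{|V_1| / |V_2|} & \text{if } v_i \in V_2 \end{cases}$$

Pairs inside the same partition contribute nothing, because x_i = x_j there:

$$x^T L x = \frac{1}{2} \sum_{i,j} w_{ij} (x_i - x_j)^2 = \frac{1}{2} \sum_{i \in V_1,\ j \in V_2} w_{ij} (x_i - x_j)^2 + \frac{1}{2} \sum_{i \in V_2,\ j \in V_1} w_{ij} (x_i - x_j)^2$$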

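$$x^T L x = \frac{1}{2} \sum_{i \in V_1,\ j \in V_2} w_{ij} \left( \sqrt{\tfrac{|V_2|}{|V_1|}} + \sqrt{\tfrac{|V_1|}{|V_2|}} \right)^2 + \frac{1}{2} \sum_{i \in V_2,\ j \in V_1} w_{ij} \left( -\sqrt{\tfrac{|V_1|}{|V_2|}} - \sqrt{\tfrac{|V_2|}{|V_1|}} \right)^2$$

$$= \text{Cut}(V_1, V_2) \left( \frac{|V_2|}{|V_1|} + \frac{|V_1|}{|V_2|} + 2 \right) = \text{Cut}(V_1, V_2) \left( \frac{|V_1| + |V_2|}{|V_1|} + \frac{|V_1| + |V_2|}{|V_2|} \right) = |V| \cdot \text{Ratio cut}(V_1, V_2)$$

Since |V| = n is fixed, minimizing x^T L x over partitions is equivalent to minimizing the ratio cut.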
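The identity x^T L x = |V| · Ratio cut(V_1, V_2) can be checked numerically. This sketch uses a hypothetical 6-node weighted graph (an assumption, not the slide's data) and the unnormalized Laplacian L = D − W; the helper names are illustrative.

```python
import math

# Hypothetical weighted graph (assumption); unnormalized Laplacian L = D - W.
EDGES = [(0, 2, 0.1), (1, 2, 0.3), (2, 3, 0.2), (3, 4, 0.1), (3, 5, 0.1)]
N = 6
W = [[0.0] * N for _ in range(N)]
for i, j, w in EDGES:
    W[i][j] = W[j][i] = w
DEG = [sum(row) for row in W]
L = [[(DEG[i] if i == j else 0.0) - W[i][j] for j in range(N)] for i in range(N)]


def indicator(v1):
    """x_i = sqrt(|V2|/|V1|) on V1 and -sqrt(|V1|/|V2|) on V2."""
    n1, n2 = len(v1), N - len(v1)
    return [math.sqrt(n2 / n1) if i in v1 else -math.sqrt(n1 / n2)
            for i in range(N)]


def quad_form(x):
    """x^T L x."""
    return sum(x[i] * L[i][j] * x[j] for i in range(N) for j in range(N))


def ratio_cut(v1):
    c = sum(W[i][j] for i in v1 for j in range(N) if j not in v1)
    return c / len(v1) + c / (N - len(v1))


for v1 in ({0}, {0, 1}, {0, 1, 2}):
    x = indicator(v1)
    print(sorted(v1), round(quad_form(x), 6), round(N * ratio_cut(v1), 6))
```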

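- The trivial solution is x = 0 (a vector of all zeros)
- Need to look for a non-trivial solution

$$\min_{V_1, V_2} x^T L x$$

$$x^T \mathbf{1} = \sum_{i=1}^{n} x_i = \sum_{i \in V_1} \sqrt{\frac{|V_2|}{|V_1|}} - \sum_{i \in V_2} \sqrt{\frac{|V_1|}{|V_2|}} = \sqrt{|V_1| |V_2|} - \sqrt{|V_1| |V_2|} = 0$$

The solution x must be orthogonal to the vector of all 1s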

$$\min_{V_1, V_2} x^T L x$$

$$x^T x = \sum_{i=1}^{n} x_i^2 = \sum_{i \in V_1} \frac{|V_2|}{|V_1|} + \sum_{i \in V_2} \frac{|V_1|}{|V_2|} = |V_2| + |V_1| = n$$
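Both constraints hold for every split, not just for particular graphs, because they depend only on |V_1| and |V_2|. This sketch checks all 2^n − 2 splits of a small node set; n = 6 is an arbitrary choice.

```python
import math
from itertools import combinations

N = 6  # number of nodes; any N >= 2 works


def indicator(v1, n):
    """x_i = sqrt(|V2|/|V1|) on V1 and -sqrt(|V1|/|V2|) on V2."""
    n1, n2 = len(v1), n - len(v1)
    return [math.sqrt(n2 / n1) if i in v1 else -math.sqrt(n1 / n2)
            for i in range(n)]


# Check every split of {0, ..., N-1} into two non-empty parts.
for size in range(1, N):
    for v1 in combinations(range(N), size):
        x = indicator(set(v1), N)
        assert abs(sum(x)) < 1e-9                        # x orthogonal to 1
        assert abs(sum(xi * xi for xi in x) - N) < 1e-9  # x^T x = n
print("constraints hold for all", 2 ** N - 2, "splits")
```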

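$$\min_{V_1, V_2} x^T L x \quad \text{subject to} \quad x \perp \mathbf{1}, \quad x^T x = n, \quad x_i = \begin{cases} \sqrt{|V_2| / |V_1|} & \text{if } v_i \in V_1 \\ -\sqrt{|V_1| / |V_2|} & \text{if } v_i \in V_2 \end{cases}$$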

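Relaxation: allow x to take arbitrary real values, keeping x ⊥ 1:

$$\min_{x \in \mathbb{R}^n,\ x \perp \mathbf{1}} \frac{x^T L x}{x^T x}$$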

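$$x^T L x = x^T D x - x^T W x = \sum_{i} D_{ii} x_i^2 - \sum_{i,j} w_{ij} x_i x_j = \frac{1}{2} \left( \sum_{i,j} w_{ij} x_i^2 - 2 \sum_{i,j} w_{ij} x_i x_j + \sum_{i,j} w_{ij} x_j^2 \right) = \frac{1}{2} \sum_{i,j} w_{ij} (x_i - x_j)^2$$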
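A quick numerical check of the identity, using a random symmetric non-negative weight matrix and a random vector x (both illustrative) and L = D − W. Because the right-hand side is a sum of non-negative terms, it also shows that L is positive semidefinite.

```python
import random

random.seed(0)
N = 6

# Random symmetric non-negative weights with a zero diagonal (illustrative only).
W = [[0.0] * N for _ in range(N)]
for i in range(N):
    for j in range(i + 1, N):
        W[i][j] = W[j][i] = random.random()

deg = [sum(row) for row in W]
L = [[(deg[i] if i == j else 0.0) - W[i][j] for j in range(N)] for i in range(N)]

x = [random.uniform(-1.0, 1.0) for _ in range(N)]
lhs = sum(x[i] * L[i][j] * x[j] for i in range(N) for j in range(N))  # x^T L x
rhs = 0.5 * sum(W[i][j] * (x[i] - x[j]) ** 2 for i in range(N) for j in range(N))
print(lhs, rhs, abs(lhs - rhs) < 1e-12)
```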

[Equation involving the diagonal entries d_11, d_22, …, d_dd]

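- If the graph has k connected components (k diagonal blocks), there are k eigenvalues of L with value 0
- The corresponding eigenvectors are

$$\begin{bmatrix} e \\ 0 \\ \vdots \\ 0 \end{bmatrix}, \quad \begin{bmatrix} 0 \\ e \\ \vdots \\ 0 \end{bmatrix}, \quad \ldots, \quad \begin{bmatrix} 0 \\ 0 \\ \vdots \\ e \end{bmatrix}$$

where e is [1 1 … 1]^T, with the length of the corresponding block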

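$$V = \begin{bmatrix}
0.41 & 0.71 & 0.58 & 0 & 0 & 0 \\
0.41 & -0.71 & 0.58 & 0 & 0 & 0 \\
-0.82 & 0 & 0.58 & 0 & 0 & 0 \\
0 & 0 & 0 & -0.82 & 0 & 0.58 \\
0 & 0 & 0 & 0.41 & 0.71 & 0.58 \\
0 & 0 & 0 & 0.41 & -0.71 & 0.58
\end{bmatrix}$$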
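The zero-eigenvalue property can be checked with NumPy on a hypothetical two-component graph (two 3-node stars, an assumption consistent with the 0.41 / 0.71 / 0.58 / 0.82 entries above). Because eigenvalue 0 is repeated, a numerical solver may return any orthonormal basis of that eigenspace, not necessarily the block indicators, but every basis vector is constant within each block.

```python
import numpy as np

# Hypothetical two-component graph: edges 0-2, 1-2 and 3-4, 3-5 (unit weights).
W = np.zeros((6, 6))
for i, j in [(0, 2), (1, 2), (3, 4), (3, 5)]:
    W[i, j] = W[j, i] = 1.0
L = np.diag(W.sum(axis=1)) - W  # unnormalized Laplacian

# eigh handles symmetric matrices and returns ascending eigenvalues.
vals, vecs = np.linalg.eigh(L)
print(np.round(vals, 2))

zero = np.isclose(vals, 0.0, atol=1e-9)
print("zero eigenvalues:", int(zero.sum()))  # one per connected component

# Each zero-eigenvalue eigenvector is constant on each block.
blocks = [[0, 1, 2], [3, 4, 5]]
for v in vecs[:, zero].T:
    print([bool(v[b].max() - v[b].min() < 1e-9) for b in blocks])
```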


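$$L = \begin{bmatrix}
1 & 0 & -1 & 0 & 0 & 0 \\
0 & 1 & -1 & 0 & 0 & 0 \\
-1 & -1 & 3 & -1 & 0 & 0 \\
0 & 0 & -1 & 3 & -1 & -1 \\
0 & 0 & 0 & -1 & 1 & 0 \\
0 & 0 & 0 & -1 & 0 & 1
\end{bmatrix}$$

$$V = \begin{bmatrix}
0.18 & 0.29 & 0.65 & 0.28 & 0.46 & 0.41 \\
0.18 & 0.29 & -0.65 & -0.28 & 0.46 & 0.41 \\
-0.66 & -0.58 & 0 & 0 & 0.26 & 0.41 \\
0.66 & -0.58 & 0 & 0 & -0.26 & 0.41 \\
-0.18 & 0.29 & 0.28 & -0.65 & -0.46 & 0.41 \\
-0.18 & 0.29 & -0.28 & 0.65 & -0.46 & 0.41
\end{bmatrix}, \qquad \Lambda = \operatorname{diag}(4.56,\ 3,\ 1,\ 1,\ 0.44,\ 0)$$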
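A minimal spectral bipartition sketch for this example. The edge list (0-2, 1-2, 2-3, 3-4, 3-5) is read off the Laplacian L on this slide and is otherwise an assumption. The eigenvector of the second-smallest eigenvalue (often called the Fiedler vector) minimizes x^T L x / x^T x over x orthogonal to the all-ones vector, and splitting nodes by its sign is one common rounding rule.

```python
import numpy as np

# Unweighted 6-node tree whose Laplacian matches L above (edges assumed from L).
W = np.zeros((6, 6))
for i, j in [(0, 2), (1, 2), (2, 3), (3, 4), (3, 5)]:
    W[i, j] = W[j, i] = 1.0
L = np.diag(W.sum(axis=1)) - W

vals, vecs = np.linalg.eigh(L)  # ascending eigenvalues
print(np.round(vals, 2))        # smallest is 0: the graph is connected

# Second-smallest eigenvalue's eigenvector: the relaxed ratio-cut solution.
fiedler = vecs[:, 1]
part_a = {i for i in range(6) if fiedler[i] > 0}
part_b = set(range(6)) - part_a
print(np.round(fiedler, 2), sorted(part_a), sorted(part_b))
```

The sign split recovers the balanced partition {0, 1, 2} versus {3, 4, 5}, the split with ratio cut 1/3 + 1/3 in the unweighted example. Which side gets the positive sign is arbitrary, since eigenvectors are defined only up to sign.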
