CS 270 Algorithms Oliver Kullmann Binary search Lists Pointers Trees Implementing rooted trees Tutorial

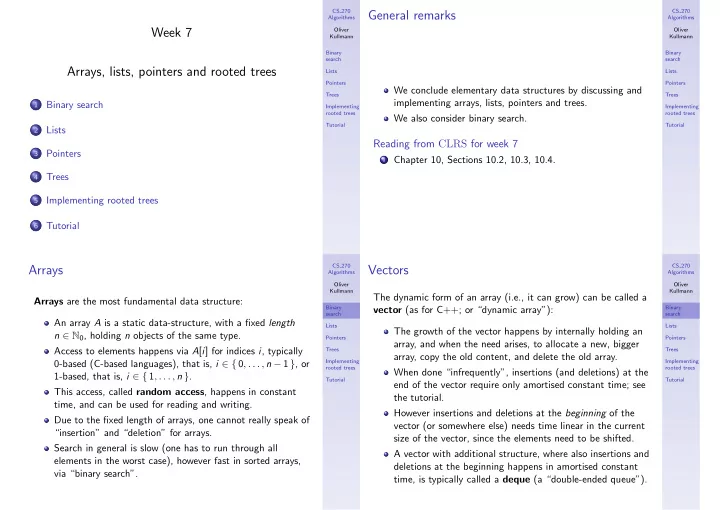

Week 7 Arrays, lists, pointers and rooted trees

1 Binary search

2 Lists

3 Pointers

4 Trees

5 Implementing rooted trees

6 Tutorial


General remarks

We conclude our treatment of elementary data structures by discussing and implementing arrays, lists, pointers and trees. We also consider binary search.

Reading from CLRS for week 7

1 Chapter 10, Sections 10.2, 10.3, 10.4.

Arrays

Arrays are the most fundamental data structure. An array A is a static data structure with a fixed length n ∈ ℕ₀, holding n objects of the same type. Elements are accessed via A[i] for an index i, which is typically 0-based (as in C-based languages), that is, i ∈ {0, …, n − 1}, or 1-based, that is, i ∈ {1, …, n}. This access, called random access, takes constant time and can be used for both reading and writing. Because the length of an array is fixed, one cannot really speak of “insertion” and “deletion” for arrays. Search is slow in general (in the worst case one has to run through all n elements), but fast in sorted arrays, via “binary search”, which needs only logarithmically many comparisons.
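The contrast between general search and search in a sorted array can be sketched as follows. This is a minimal illustration, not code from the lecture: it assumes 0-based indexing, uses a Python list as a stand-in for a fixed-length array, and the function names `linear_search` and `binary_search` are chosen here for illustration only.

```python
from typing import Optional, Sequence


def linear_search(a: Sequence[int], x: int) -> Optional[int]:
    """Return an index i with a[i] == x, or None if x does not occur.

    Works for any array; in the worst case all len(a) elements are inspected.
    """
    for i in range(len(a)):
        if a[i] == x:
            return i
    return None


def binary_search(a: Sequence[int], x: int) -> Optional[int]:
    """Return an index i with a[i] == x, or None if x does not occur.

    Assumes a is sorted in ascending order. Each step halves the
    remaining index range [lo, hi] using constant-time random access.
    """
    lo, hi = 0, len(a) - 1
    while lo <= hi:
        mid = (lo + hi) // 2
        if a[mid] == x:
            return mid
        if a[mid] < x:
            lo = mid + 1   # x can only be in the right half
        else:
            hi = mid - 1   # x can only be in the left half
    return None


sorted_array = [2, 3, 5, 7, 11, 13]
found = binary_search(sorted_array, 7)      # index of the element 7
missing = binary_search(sorted_array, 4)    # 4 is not in the array
```

Both functions only read the array via A[i]; binary search additionally relies on the sortedness of the array to discard half of the remaining range after each comparison.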
