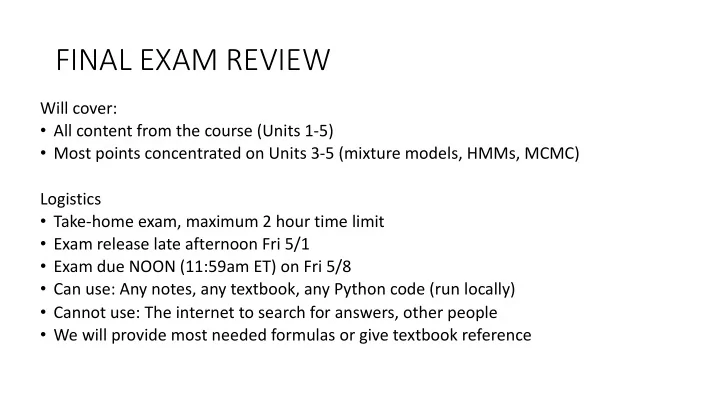

SLIDE 1 FINAL EXAM REVIEW

Will cover:

- All content from the course (Units 1-5)

- Most points concentrated on Units 3-5 (mixture models, HMMs, MCMC)

Logistics

- Take-home exam, maximum 2 hour time limit

- Exam release late afternoon Fri 5/1

- Exam due NOON (11:59am ET) on Fri 5/8

- Can use: Any notes, any textbook, any Python code (run locally)

- Cannot use: The internet to search for answers, other people

- We will provide most needed formulas or give textbook reference

SLIDE 2

Takeaway Messages

1) When uncertain about a variable, don’t condition on it, integrate it away! 2) Model performance is only as good as your fitting algorithm, initialization, and hyperparameter selection. 3) MCMC is a powerful way to estimate posterior distributions (and resulting expectations) even when the model is not analytically tractable

SLIDE 3

Takeaway 1!

When uncertain about a parameter, better to INTEGRATE AWAY than CONDITION ON OK: Using a point estimate BETTER: Integrate away ”w” via the sum rule

p(x∗| ˆ w)

<latexit sha1_base64="lKLrfhc+1Z5XvW67gMKyBwIphE0=">AB+nicbVBNT8JAEJ36ifhV9OhlIzFBD6RFEz0SvXjERD4SqGS7LBhu212tyIp/BQvHjTGq7/Em/GBXpQ8CWTvLw3k5l5fsSZ0o7zba2srq1vbGa2sts7u3v7du6gpsJYElolIQ9lw8eKciZoVTPNaSOSFAc+p3V/cDP1649UKhaKez2KqBfgnmBdRrA2UtvORYWnhzM0Rq0+1slwgk7bdt4pOjOgZeKmJA8pKm37q9UJSRxQoQnHSjVdJ9JegqVmhNJthUrGmEywD3aNFTgCovmZ0+QSdG6aBuKE0JjWbq74kEB0qNAt90Blj31aI3Ff/zmrHuXnkJE1GsqSDzRd2YIx2iaQ6owyQlmo8MwUQycysifSwx0SatrAnBXx5mdRKRfe8WLq7yJev0zgycATHUAXLqEMt1CBKhAYwjO8wps1tl6sd+tj3rpipTOH8AfW5w+0LpL+</latexit>

p(x∗|X) = Z

w

p(x∗, w|X)dw

<latexit sha1_base64="x2/tMCazDkzDw1uMp72i63SWnY=">ACDnicbVDLTsJAFJ3iC/GFunQzkZAgMaRFE92YEN24xEQeCdRmOp3ChOm0mZkKBPkCN/6KGxca49a1O/GAbpQ8CQ3OTn3tx7jxsxKpVpfhupeWV1bX0emZjc2t7J7u7V5dhLDCp4ZCFoukiSRjlpKaoYqQZCYICl5G27ua+I17IiQN+a0aRsQOUIdTn2KktORk81EBDu6K8AE2j+AFbFOunD6MCgOneAz7M9nrO9mcWTKngIvESkgOJKg62a+2F+I4IFxhqRsWak7BESimJGxpl2LEmEcA91SEtTjgIi7dH0nTHMa8WDfih0cQWn6u+JEQqkHAau7gyQ6sp5byL+57Vi5Z/bI8qjWBGOZ4v8mEVwk20KOCYMWGmiAsqL4V4i4SCudYEaHYM2/vEjq5ZJ1UirfnOYql0kcaXADkEBWOAMVMA1qIawOARPINX8GY8GS/Gu/Exa0Zycw+APj8we+c5jE</latexit>

SLIDE 4 Takeaway 2

- Initialization, remember CP3 (GMMs)

- as well as CP5 (coming!)

- Algorithm, remember the difference between

LBFGS and EM in CP3 * Hyperparameter: Remember the poor performance in CP2

Difference between purple and blue is 0.01 on log scale When normalized over 400 pixels (20x20) per image Means purple model says average validation set image is exp(0.01 * 400) = 54.5 times more likely than the blue model

SLIDE 5 Takeaway 3

- Can use MCMC to do posterior predictive

p(x∗|X) = Z

w

p(x∗, w|X)dw = Z

w

p(x∗|w)p(w|X)dw = 1 S

S

X

s=1

p(x∗|ws), ws iid ∼ p(ws|X)

<latexit sha1_base64="yD5xTIqcA75dojf35Uv58djnkRk=">ACl3icbVFda9swFJW9r9b7aNr1ZezlsrCRlBLsrtAxKCvrGH1s6dIG4sTIstyKyh+VrpcEzX9pP2Zv+zeTkzxsyS4Ijs65hyPdG5dSaPT93474OGjx082Nr2nz56/2Gpt71zpolKM91khCzWIqeZS5LyPAiUflIrTLJb8Or47bfTr71xpUeTfcFbyUZvcpEKRtFSUetn2YHpeA9+wKAL74hFDlGEyg702hvHyYLPpl4YeitqFadC1c60kVZSaozWUNoa6yOjoB5fQpO0sI01dPchvL+vaGJvRjedSNmd4tKEyKdohEjq2oRaZHVjbCxNTNRq+z1/XrAOgiVok2WdR61fYVKwKuM5Mkm1HgZ+iSNDFQome2FlealTaY3fGhTjOuR2Y+1xreWiaBtFD25Ahz9m+HoZnWsy2nRnFW72qNeT/tGF6YeREXlZIc/ZIitJGABzZIgEYozlDMLKFPCvhXYLbVzRbtKzw4hWP3yOrg6AXvewcXh+2Tz8txbJDX5A3pkIAckRNyRs5JnzBn1/nonDpf3FfuJ/ere7ZodZ2l5yX5p9yLP1A7wmo=</latexit>

SLIDE 6

You are capable of so many things now!

Given a proposed probabilistic model, you can do:

ML estimation of parameters MAP estimation of parameters EM to estimate parameters MCMC estimation of posterior Heldout likelihood computation Hyperparameter selection via CV Hyperparameter selection via evidence

SLIDE 7 Unit 1

Probabilistic Analysis Skills

- Discrete and continuous r.v.

- Sum rule and product rule

- Bayes rule (derived from above)

- Expectations

- Independence

Distributions

- Bernoulli distribution

- Beta distribution

- Gamma function

- Dirichlet distribution

Data analysis

- Beta-Bernoulli for binary data

- ML estimation of ”proba. heads"

- MAP estimation of “proba. heads"

- Estimating the posterior

- Predicting new data

- Dirichlet-Categorical for discrete data

- ML estimation of unigram probas

- MAP estimation of unigram probas

- Estimating the posterior

- Predicting new data

Optimization Skills

- Finding extrema by zeros of first derivative

- Handling Constraints via Lagrange multipliers

SLIDE 8

Example Unit 1 Question

a) True or False: Bayes Rule can be proved using the Sum Rule and Product Rules a) You’re modeling the wins/losses of your favorite sports team with a Beta-Bernoulli model.

a) You assume each game’s binary outcome (win=1/loss=0) is iid. b) You observe in preseason play: 5 wins and 3 losses c) Suggest a prior to use for the win probability d) Identify 2 or more assumptions about this model that may not be valid in the real world (with concrete reasons)

SLIDE 9

Example Unit 1 Answer

SLIDE 10 Unit 2

Probabilistic Analysis Skills

- Joints, conditionals, marginals

- Covariance matrices (pos. definite, symmetric)

- Gaussian conjugacy rules

Linear Algebra Skills

- Determinants

- Positive definite

- Invertibility

Distributions

- Univariate Gaussian distribution

- Multivariate Gaussian distribution

Data analysis

- Gaussian-Gaussian for regression

- ML estimation of weights

- MAP estimation of weights

- Estimating the posterior over weights

- Predicting new data

Optimization Skills

- Convexity and second derivatives

- Finding extrema by zeros of first derivative

- First and second order gradient descent

SLIDE 11 Example Unit 2 Question

You are doing regression with the following model

- Normal prior on the weights

- Normal likelihood:

- a. Consider the following two estimators for t_*. What’s the difference?

- b. Suggest at least 2 ways to pick a value for the hyperparameter \sigma

p(tn|xn) = NormPDF(w ∗ xn, σ2)

<latexit sha1_base64="a43bVnPThLqB7flrdowacMns+mI=">ACG3icbVBNSwMxEM36WetX1aOXYBGqSNmtgl4EURFPUsGq0NYlm6ZtaJdklm1rP0fXvwrXjwo4knw4L8x2/bg14OBx3szMwLIsENuO6nMzI6Nj4xmZnKTs/Mzs3nFhbPTRhryio0FKG+DIhgitWAQ6CXUaERkIdhF0DlL/4pw0N1Bt2I1SVpKd7klICV/FwpKoCv8B2+9dUa3sU1YLeQnIRalg+PegV8g9dTawPXDG9JclVaw34u7xbdPvBf4g1JHg1R9nPvtUZIY8kUEGMqXpuBPWEaOBUsF62FhsWEdohLVa1VBHJTD3p/9bDq1Zp4GaobSnAfX7REKkMV0Z2E5JoG1+e6n4n1eNoblT7iKYmCKDhY1Y4EhxGlQuME1oyC6lhCqub0V0zbRhIKNM2tD8H6/Jecl4reZrF0upXf2x/GkUHLaAUVkIe20R46RmVUQRTdo0f0jF6cB+fJeXeBq0jznBmCf2A8/EFs5Sesg=</latexit>

ˆ t∗ = wMAP x∗ ˜ t∗ = Et∼p(t|x∗,X)[t]

<latexit sha1_base64="6vUJKUEKlj3EmXfpJftwyboOxw=">ACO3icbVBNSxBFOzRaHT8Ws0xl0cWRUWGSPoRdgYhFyEVxd2BmHnt5et7Hng+436jLO/Lin/DmJZcIiHX3NOzu4hfBQ1FVT36vQpTKTQ6zoM1Nv5hYvLj1LQ9Mzs3v1BZXDrRSaYb7JEJqoVUs2liHkTBUreShWnUSj5aXjxvfRPL7nSIomPsZ9yP6LnsegKRtFIQeXI61HMsQjWYWUXrs7yg2+NAq6DdvzbA+F7PAn14so9sIw3y+CHMHTIoJ0FeGmjG9Aa62ANiD4QaXq1JwB4C1xR6RKRmgElXuvk7As4jEySbVu06Kfk4VCiZ5YXuZ5ilF/Sctw2NacS1nw9uL2DZKB3oJsq8GgPp/IaR1PwpNslxfv/ZK8T2vnWF3x89FnGbIYzb8qJtJwATKIqEjFGco+4ZQpoTZFViPKsrQ1G2bEtzXJ78lJ5s192t83CrWt8b1TFPpMvZJW4ZJvUyQ/SIE3CyC35SX6TR+vO+mX9sf4Oo2PWaOYTeQHr382I6qL</latexit>

SLIDE 12

Example Unit 2 Answer

SLIDE 13 Unit 3: K-Means and Mixture Models

Distributions

- Mixtures of Gaussians (GMMs)

- Mixtures in general

- Can use any likelihood (not just Gauss)

Numerical Methods logsumexp Data analysis

- K-means or GMM for a dataset

- How to pick K hyperparameter

- Why multiple inits matter

Optimization Skills

- K-means objective and algorithm

- Coordinate ascent / descent algorithms

- Optimization objectives with hidden vars

- Complete likelihood: p(x, z | \theta)

- Incomplete likelihood: p( x | \theta)

- Expectations of complete likelihood

- How to derive it

- Why it is important

- Expectation-Maximization algorithm

- Lower bound objective

- What E-step does

- What M-step does

SLIDE 14 Ex Exampl ple Uni nit 3 Que uestion

Consider two possible models for clustering 1-dim. data

- K-Means

- Gaussian mixtures

Name ways that the GMM is more flexible as a model:

- How is the GMM’s treatment of assignments more flexible?

- How is the GMM’s parameterization of a “cluster” more flexible?

Under what limit does the GMM likelihood reduce to the K-means objective?

SLIDE 15

Ex Exampl ple Uni nit 3 An Answer

SLIDE 16 Unit 4: Markov models and HMMs

Probabilistic Analysis Skills

- Markov conditional independence

- Stationary distributions

- Deriving independence properties

- Like HW4 problem 1

Linear Algebra Skills

- Eigenvectors/values for stationary

distributions Distributions

Algorithm Skills

- Forward algorithm

- Backward algorithm

- Viterbi algorithm

(all examples of dynamic programming) Optimization Skills

- EM for HMMs

- E-step

- M-step

SLIDE 17 Example Unit 4 Question

- Describe how the Viterbi algorithm is an instance of dynamic programming

Identify all the key parts:

- What is the fundamental problem being solved?

- How is the final solution built from solutions to smaller problems?

- How to describe all the solutions as a big “table” that should be filled in?

- What is the “base case” update (the simplest subproblem)?

- What is the recursive update?

SLIDE 18

Example Unit 4 Answer

SLIDE 19 Unit 5: Markov Chain Monte Carlo

Probabilistic Analysis Skills

- Inverse CDF rule for sampling

- Transformations of random variables

- Ancestral sampling

- Stationary distributions

- Remember, always a unique stationary

distribution if Markov chain is ergodic

Linear Algebra Skills

- Eigenvectors/values for stationary

distributions

MCMC algorithms

- Metropolis

- Metropolis-Hastings

- Gibbs sampling

Data Analysis

- Using MCMC to estimate a posterior

SLIDE 20 Example Unit 5 Question

- 5a. Can we use the inverse CDF rule for sampling from a univariate

Normal analytically? Can we do it numerically? If so, how?

- 5b. How would you use ancestral sampling to sample from a Bayesian

Linear regression model?

- 5c. T/F: We only need to run one MCMC chain in practice and we can

use all samples from that china

SLIDE 21

Example Unit 5 Answer