
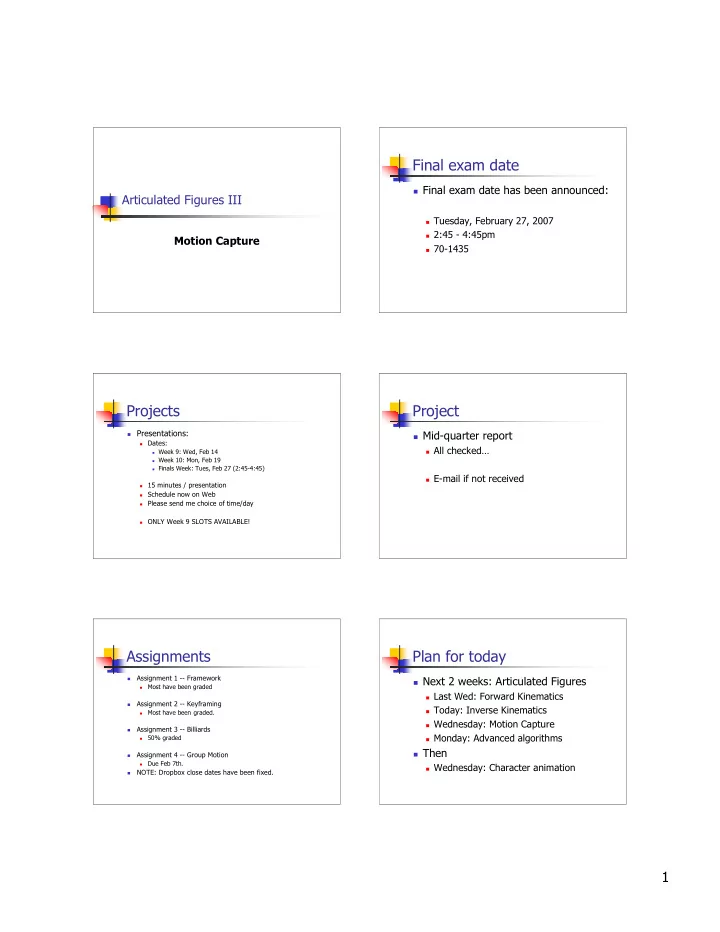

Articulated Figures III

Motion Capture

Final exam date

Final exam date has been announced:

Tuesday, February 27, 2007, 2:45 - 4:45pm, 70-1435

Projects

Presentations:

Dates:

Week 9: Wed, Feb 14

Week 10: Mon, Feb 19

Finals Week: Tues, Feb 27 (2:45-4:45)

15 minutes / presentation

Schedule now on Web

Please send me choice of time/day

ONLY Week 9 SLOTS AVAILABLE!

Project

Mid-quarter report

All checked… E-mail if not received

Assignments

Assignment 1 -- Framework

Most have been graded

Assignment 2 -- Keyframing

Most have been graded.

Assignment 3 -- Billiards

50% graded

Assignment 4 -- Group Motion

Due Feb 7th.

NOTE: Dropbox close dates have been fixed.

Plan for today

Next 2 weeks: Articulated Figures

Last Wed: Forward Kinematics

Today: Inverse Kinematics

Wednesday: Motion Capture

Monday: Advanced algorithms

Then

Wednesday: Character animation