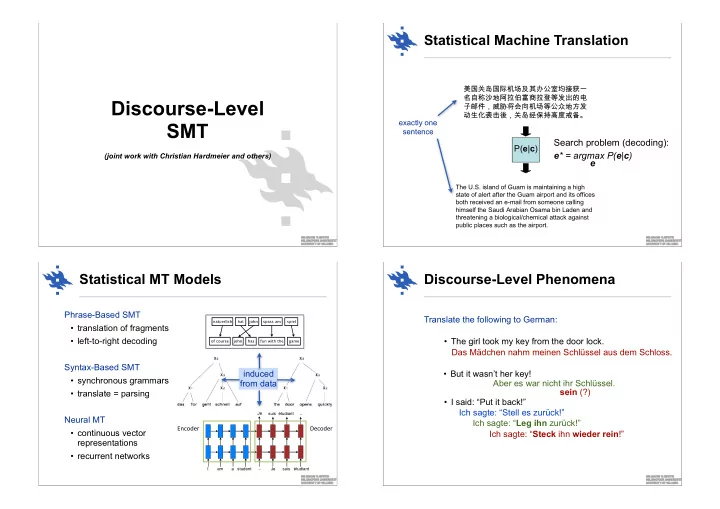

Discourse-Level SMT

(joint work with Christian Hardmeier and others)

Statistical Machine Translation

- The U.S. island of Guam is maintaining a high

state of alert after the Guam airport and its offices both received an e-mail from someone calling himself the Saudi Arabian Osama bin Laden and threatening a biological/chemical attack against public places such as the airport.

P(e|c)

Search problem (decoding): e* = argmax P(e|c) e

exactly one sentence

Statistical MT Models

Phrase-Based SMT

- translation of fragments

- left-to-right decoding

Syntax-Based SMT

- synchronous grammars

- translate = parsing

Neural MT

- continuous vector

representations

- recurrent networks

am a student _ Je suis étudiant Je suis étudiant _ I

Encoder' Decoder'

induced from data

Discourse-Level Phenomena

Translate the following to German: Ich sagte: “Stell es zurück!” Aber es war nicht ihr Schlüssel. Ich sagte: “Leg ihn zurück!” Das Mädchen nahm meinen Schlüssel aus dem Schloss. sein (?) Ich sagte: “Steck ihn wieder rein!”

- The girl took my key from the door lock.

- But it wasn’t her key!

- I said: “Put it back!”