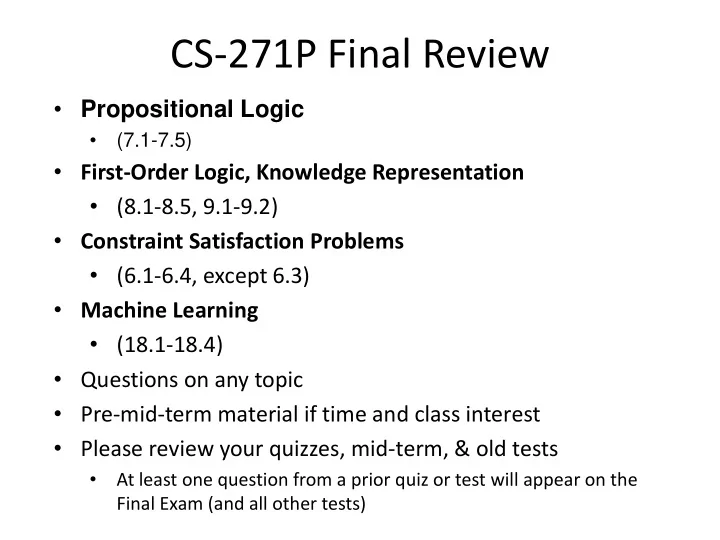

CS-271P Final Review

- Propositional Logic (7.1-7.5)

- First-Order Logic, Knowledge Representation (8.1-8.5, 9.1-9.2)

- Constraint Satisfaction Problems (6.1-6.4, except 6.3)

- Machine Learning (18.1-18.4)

- Questions on any topic

- Pre-mid-term material, if time and class interest permit

- Please review your quizzes, mid-term, & old tests

- At least one question from a prior quiz or test will appear on the Final Exam (and on all other tests)