Computing

Christian Zeitnitz

Bergische Universität Wuppertal

- Overview

- Organization

- Resource Utilization

- Plans for 2015 and beyond

- Summary


Overview

- WLCG resource pledges in 2014
- CPU: ~190,000 CPU cores
- Disk: ~180


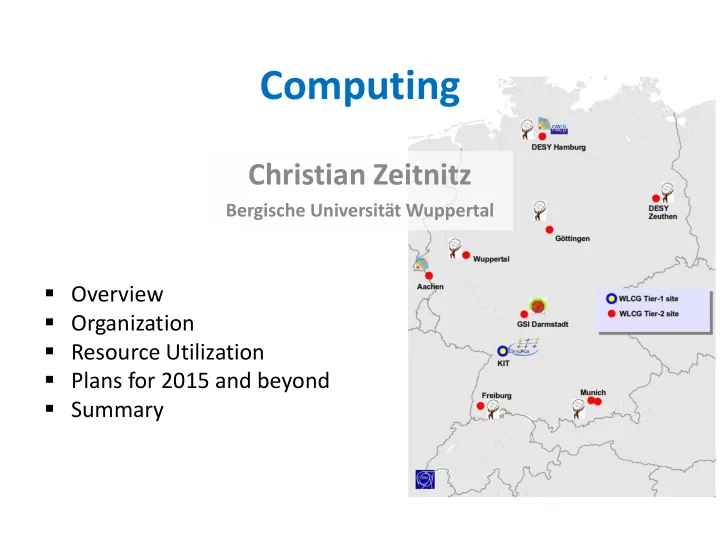

Göttingen, Munich, Wuppertal


distribution

funding agencies (experiment specific)


successfully and with high reliability

(up to 200%) more CPU resources than pledged

well as for simulation

the same cluster

Resource usage 7/2012-6/2013


NAF CPU usage by institutes

- DESY: 31%
- Uni-HH: 30% (partially own resources)
- 16 other German institutes: 39%


[Figure: ATLAS jobs, Jan-Jul 2012. Series: Simulation, Analysis. Axis marks: 100,000 jobs and 30,000 jobs.]


computing models and software efficiency


data!


institution of the Tier-center (approx. 50% of the total cost)


analysis resources


Association

resources (Tier-3, HPC, Cloud …) – No solution for analysis


BMBF, DESY, KIT, GSI, MPP

Terascale Allianz, DESY, KIT, GSI, BMBF


University of Hamburg owns part of the NAF resources, hence the large share

