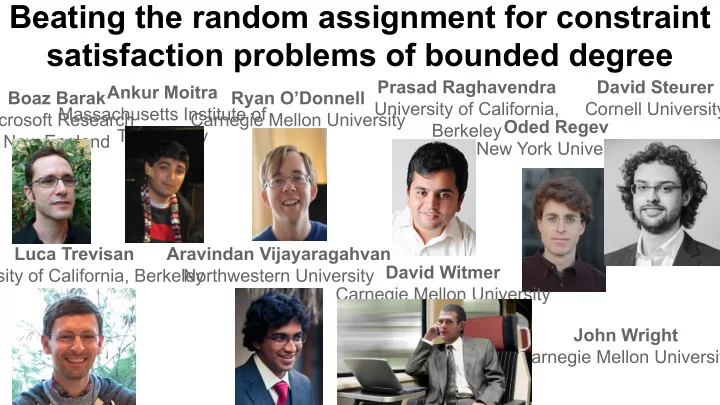

Beating the random assignment for constraint satisfaction problems of bounded degree

Boaz Barak, Microsoft Research New England
Ankur Moitra, Massachusetts Institute of Technology
Ryan O’Donnell, Carnegie Mellon University
Prasad Raghavendra, University of California, Berkeley
Oded Regev, New York University
David Steurer, Cornell University
Luca Trevisan, University of California, Berkeley
Aravindan Vijayaraghavan, Northwestern University
David Witmer, Carnegie Mellon University
John Wright, Carnegie Mellon University