3. Examples

Showing correctness, recursion and recurrences [references to the literature are given with the examples]


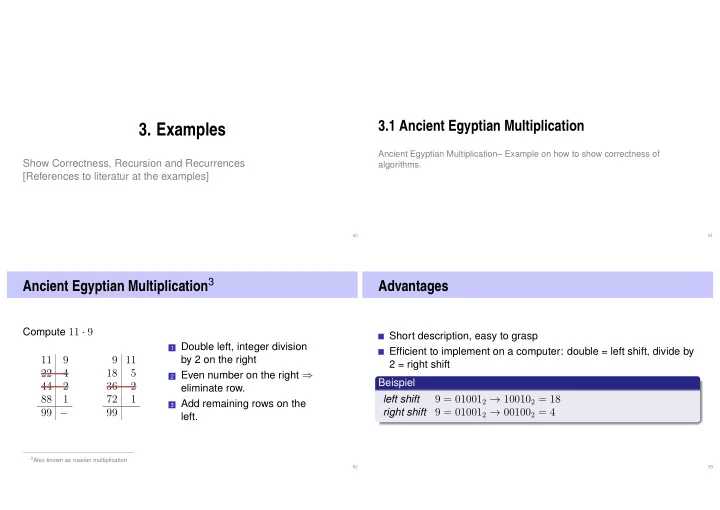

3.1 Ancient Egyptian Multiplication

Ancient Egyptian Multiplication: an example of how to show the correctness of an algorithm.


Ancient Egyptian Multiplication³

Compute 11 · 9

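| Left (doubled) | Right (halved) | Row |
|---|---|---|
| 11 | 9 | kept |
| 22 | 4 | eliminated |
| 44 | 2 | eliminated |
| 88 | 1 | kept |

Sum of the kept left entries: 11 + 88 = 99

The same product with the factors swapped, 9 · 11:

| Left (doubled) | Right (halved) | Row |
|---|---|---|
| 9 | 11 | kept |
| 18 | 5 | kept |
| 36 | 2 | eliminated |
| 72 | 1 | kept |

Sum of the kept left entries: 9 + 18 + 72 = 99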

1. Double the number on the left, integer-divide the number on the right by 2.
2. Even number on the right ⇒ eliminate the row.
3. Add the remaining rows on the left.

³ Also known as Russian multiplication.
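The three steps above can be sketched in Python as follows. This is a minimal illustration, not part of the original slides: the function name `egyptian_multiply` and the restriction to non-negative integers are assumptions made for the example.

```python
def egyptian_multiply(a: int, b: int) -> int:
    """Multiply two non-negative integers by doubling and halving.

    Hypothetical helper for illustration; `a` is the left column,
    `b` is the right column of the table.
    """
    if a < 0 or b < 0:
        raise ValueError("both factors must be non-negative")

    total = 0
    while b >= 1:
        # Step 2: rows with an even right entry are eliminated,
        # so only rows with an odd right entry contribute.
        if b % 2 == 1:
            # Step 3: add the left entry of every remaining row.
            total += a
        # Step 1: double the left entry, integer-divide the right entry by 2.
        a *= 2
        b //= 2
    return total


print(egyptian_multiply(11, 9))  # 11 + 88 = 99
```

Swapping the factors, `egyptian_multiply(9, 11)`, walks through the rows of the second table and adds 9 + 18 + 72.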

Advantages

- Short description, easy to grasp
- Efficient to implement on a computer: doubling = left shift, dividing by 2 = right shift

Example

Left shift: 9 = 01001₂ → 10010₂ = 18

Right shift: 9 = 01001₂ → 00100₂ = 4
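The shift correspondence can be checked directly. The sketch below is only an illustration under the assumption of non-negative Python integers: it reproduces the two bit patterns from the example and shows a variant of the multiplication that uses shifts and a parity test on the lowest bit. The names `as_bits` and `egyptian_multiply_shifts` are hypothetical.

```python
def as_bits(n: int, width: int = 5) -> str:
    """Render a non-negative integer as a zero-padded binary string."""
    return format(n, f"0{width}b")


def egyptian_multiply_shifts(a: int, b: int) -> int:
    """Same doubling/halving scheme, written with bit operations."""
    total = 0
    while b > 0:
        if b & 1:      # lowest bit set: right entry is odd, keep the row
            total += a
        a <<= 1        # left shift doubles the left entry
        b >>= 1        # right shift halves the right entry (rounding down)
    return total


print(as_bits(9), "->", as_bits(9 << 1), "=", 9 << 1)  # 01001 -> 10010 = 18
print(as_bits(9), "->", as_bits(9 >> 1), "=", 9 >> 1)  # 01001 -> 00100 = 4
```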
