SLIDE 1 2010-09-11 1

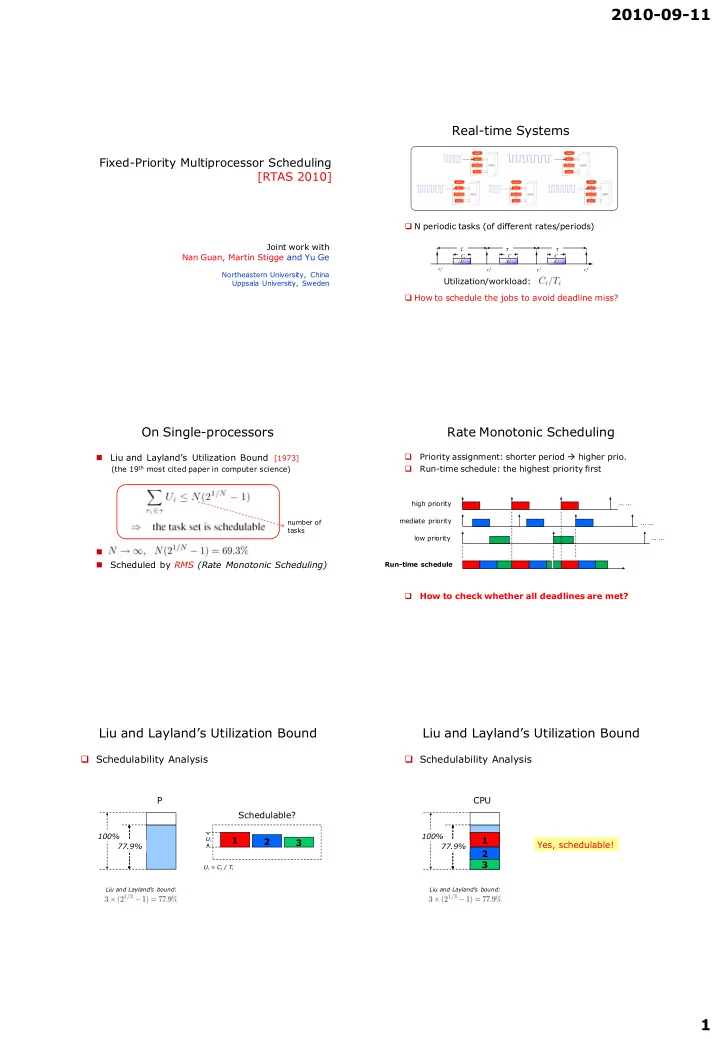

Fixed-Priority Multiprocessor Scheduling [RTAS 2010]

Joint work with Nan Guan, Martin Stigge and Yu Ge

Northeastern University, China

Uppsala University, Sweden

Real-time Systems

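N periodic tasks (of different rates/periods)

How to schedule the jobs to avoid deadline miss?

[Figure: timeline of task i: jobs Ji1, Ji2, Ji3 are released at ri1, ri2, ri3 (next release ri4), one period Ti apart, and each executes for Ci]

Utilization/workload: $U_i = C_i / T_i$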

On Single-processors

Liu and Layland’s Utilization Bound [1973]

(the 19th most cited paper in computer science)

Scheduled by RMS (Rate Monotonic Scheduling)

$\sum_{i=1}^{N} C_i / T_i \le N(2^{1/N} - 1)$, where $N$ is the number of tasks

Rate Monotonic Scheduling

Priority assignment: shorter period, higher priority.

Run-time schedule: the highest priority first

How to check whether all deadlines are met?

[Figure: run-time schedule of a high priority, a medium priority and a low priority task]

Liu and Layland’s Utilization Bound

Schedulability Analysis

$U_i = C_i / T_i$

Liu and Layland’s bound: $\sum_{i=1}^{N} U_i \le N(2^{1/N} - 1)$

[Figure: tasks 1, 2, 3 on one CPU of 100% capacity; their total utilization lies below the 77.9% bound for three tasks. Schedulable? Yes, schedulable!]
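The utilization test on this slide can be sketched in a few lines. This is a minimal illustration, not code from the talk: the names `ll_bound` and `rms_utilization_test` and the example task sets are assumptions, and tasks are given as (C, T) pairs with deadline equal to period.

```python
def ll_bound(n):
    """Liu and Layland's bound N(2^(1/N) - 1) for n tasks."""
    return n * (2 ** (1 / n) - 1)


def rms_utilization_test(tasks):
    """Sufficient (not necessary) RMS test for (C, T) tasks on one processor."""
    total = sum(c / t for c, t in tasks)
    return total <= ll_bound(len(tasks))


# Hypothetical task sets, chosen only to show both outcomes.
light = [(1, 4), (1, 5), (1, 10)]   # total utilization 0.55
heavy = [(2, 4), (2, 5)]            # total utilization 0.90

print(f"bound for 3 tasks: {ll_bound(3):.3f}")
print("light set passes:", rms_utilization_test(light))
print("heavy set passes:", rms_utilization_test(heavy))
```

For three tasks the bound evaluates to about 77.9%, the value on the slide, and it falls towards ln 2, about 69.3%, as the number of tasks grows. A set that fails the test is not necessarily unschedulable.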

Multiprocessor (multicore) Scheduling

Significantly more difficult:

Timing anomalies

Hard to identify the worst-case scenario

Bin-packing/NP-hard problems

Multiple resources, e.g. caches, bandwidth

… …

Open Problem (since 1973)

Find a multiprocessor scheduling algorithm that can achieve Liu and Layland’s utilization bound

$\sum_{i=1}^{N} C_i / T_i \le M \cdot N(2^{1/N} - 1)$ ?

where $M$ is the number of processors

Multiprocessor Scheduling

[Figure: three approaches on cpu 1, cpu 2, cpu 3. Global Scheduling: new tasks enter a single waiting queue shared by all cpus. Partitioned Scheduling: each task is assigned to one cpu. Partitioned Scheduling with Task Splitting: some tasks are split across cpus]

Best Known Results (before 2010)

Lehoczky et al. CMU ECRTS 2009

Best Known Results

[Figure: bar chart of utilization bounds in %, axis from 10 to 80. Categories: Multiprocessor Scheduling split into Global, Partitioned and Task Splitting, each with Fixed Priority and Dynamic Priority. Values shown: 38, 50, 50, 50, 65, 66. Citations shown: [OPODIS’08] [TPDS’05] [ECRTS’03] [RTSS’04] [RTCSA’06]]

Our New Result: 69.3% (Liu and Layland’s Utilization Bound)

RTAS 2010; RTSS 2010 (submitted)

Multiprocessor Scheduling

[Figure: Global Scheduling: new tasks enter a single waiting queue served by cpu 1, cpu 2 and cpu 3]

Would fixed-priority scheduling, e.g. “RMS”, work?

Unfortunately, “RMS” suffers from Dhall’s anomaly

Utilization may be “0%”

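Dhall’s anomaly

[Figure: Task 1 and Task 2 each have execution time ε and period 1; Task 3 has execution time 1 and period 1+ε]

Dhall’s anomaly

Schedule the 3 tasks on 2 CPUs using “RMS”

[Figure: tasks 1 and 2 occupy CPU1 and CPU2 for ε at the start of each period, and task 3 runs in the remaining time]

Deadline miss

Dhall’s anomaly

(M+1 tasks and M processors)

[Figure: tasks #1 … #M, each with utilization ε/1, and task #M+1 with utilization 1/(ε+1), on processors P1 … PM]

$U = \dfrac{M\varepsilon + \frac{1}{1+\varepsilon}}{M}$, which approaches 0 as ε shrinks and M grows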
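The three-task example can be replayed with a small unit-step simulation. This is a sketch under stated assumptions, not the authors' tool: time is discretized into integer ticks, ε is taken as 1 tick and the period as 10 ticks, all tasks are released at time 0, and the function name `global_rm_misses` is made up for illustration.

```python
def global_rm_misses(tasks, cpus, horizon):
    """Simulate global rate-monotonic scheduling in unit time steps.

    tasks: list of (C, T) in integer ticks, deadline = period, released at t = 0.
    Returns a list of (task_index, time), one entry per missed deadline.
    """
    # Rate monotonic: the shorter the period, the higher the priority.
    by_priority = sorted(range(len(tasks)), key=lambda i: tasks[i][1])
    remaining = [0] * len(tasks)
    misses = []
    for t in range(horizon):
        for i, (c, period) in enumerate(tasks):
            if t % period == 0:
                if remaining[i] > 0:      # previous job did not finish in time
                    misses.append((i, t))
                remaining[i] = c          # release the next job
        ready = [i for i in by_priority if remaining[i] > 0]
        for i in ready[:cpus]:            # the highest-priority ready jobs run
            remaining[i] -= 1
    return misses


EPS, PERIOD = 1, 10                       # assumed tick values for ε and 1
dhall = [(EPS, PERIOD), (EPS, PERIOD), (PERIOD, PERIOD + EPS)]
print(global_rm_misses(dhall, cpus=2, horizon=12))
```

With these values the third task (index 2) misses its deadline at tick 11, even though the total utilization is only about 1.11 on two CPUs. With three CPUs, the same call reports no miss.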

Multiprocessor Scheduling

[Figure: Partitioned Scheduling: tasks 1 to 9 are each assigned to one of cpu 1, cpu 2, cpu 3]

Resource utilization may be limited to 50%

Partitioned Scheduling

The Partitioning Problem is similar to the Bin-packing Problem (NP-hard)

Limited Resource Usage, 50%

necessary condition to guarantee schedulability

[Figure: M+1 tasks #1 … #M+1, each with utilization 50%+ε, to be placed on M processors P1 … PM]
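The 50% limit can be checked with a toy bin-packing run. This is a sketch with assumed names (`first_fit`) and assumed numbers (ε = 0.01, M = 3); it uses a per-processor capacity of 100%, which is more than any fixed-priority test would allow, so a failure here means no partitioning exists at all.

```python
def first_fit(utils, m, capacity=1.0):
    """Place each utilization on the first processor with room; None if one does not fit."""
    loads = [0.0] * m
    for u in utils:
        for p in range(m):
            if loads[p] + u <= capacity:
                loads[p] += u
                break
        else:
            return None   # this task fits on no processor without splitting
    return loads


M = 3
tasks = [0.51] * (M + 1)          # M+1 tasks of utilization 50% + ε
print(first_fit(tasks, M))        # no two such tasks can share a processor
print(sum(tasks) / M)             # yet the average load per processor is only 0.68
```

As M grows, the average load of such a task set, (M+1)(0.5+ε)/M, approaches 50%, which is where the limit on the slide comes from.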

Partitioned Scheduling

The Partitioning Problem is similar to the Bin-packing Problem (NP-hard)

Limited Resource Usage

necessary condition to guarantee schedulability

[Figure: tasks #1 … #M, each with utilization 50%+ε, on processors P1 … PM; task #M+1 is split into two parts, #M+1,1 and #M+1,2]

Multiprocessor Scheduling

[Figure: Partitioned Scheduling with Task Splitting: tasks are assigned to cpu 1, cpu 2 and cpu 3, with some tasks split across cpus]

Partitioned Scheduling

Partitioning

[Figure: tasks 1 to 9 to be partitioned onto P1, P2, P3]

Bin-Packing with Item Splitting

Resource can be “fully” (better) utilized

[Figure: Bin1, Bin2, Bin3 filled with items 1 to 8, where items 1 and 8 are split into 1_1, 1_2 and 8_1, 8_2]

Previous Algorithms

[Kato et al. IPDPS’08] [Kato et al. RTAS’09] [Lakshmanan et al. ECRTS’09]

Sort the tasks in some order, e.g. utilization or priority order

Select a processor, and assign as many tasks as possible

[Figure: tasks 1 to 8 in sorted order, about to be assigned to P1]

Lakshmanan’s Algorithm [ECRTS’09]

Sort all tasks in decreasing order of utilization

Pick one processor, and assign as many tasks as possible

[Figure: animation of tasks 1 to 8, ordered from lowest util. to highest util., being packed onto P1, then P2, then P3. Final assignment: P1 holds 8, 7 and subtask 6_1; P2 holds 6_2, 5, 4, 3 and subtask 2_1; P3 holds 2_2 and 1]

key feature: “depth-first” partitioning with decreasing utilization order

Utilization Bound: 65%
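The “depth-first” packing order can be sketched as follows. This is only an illustration of the order of assignment: the function name `depth_first_partition` is made up, and the per-processor limit `cap` is an assumption standing in for whatever schedulability test the original algorithm applies, which the slides do not state.

```python
def depth_first_partition(utils, m, cap):
    """Fill processor 0 up to `cap`, then processor 1, and so on.

    Tasks are taken in decreasing order of utilization; a task that does not
    fit is split and its remainder moves to the next processor.
    Returns per-processor lists of (task_index, share), or None on overflow.
    """
    order = sorted(range(len(utils)), key=lambda i: utils[i], reverse=True)
    assignment = [[] for _ in range(m)]
    p, load = 0, 0.0
    for task in order:
        left = utils[task]
        while left > 1e-12:
            if p == m:
                return None               # ran out of processors
            share = min(left, cap - load)
            assignment[p].append((task, share))
            load += share
            left -= share
            if cap - load <= 1e-12:       # processor is full: move on
                p, load = p + 1, 0.0
    return assignment


# Hypothetical utilizations and an assumed cap of 0.75 per processor.
print(depth_first_partition([0.5, 0.5, 0.25, 0.25], m=2, cap=0.75))
```

In this run task 1 is split between the two processors: a task is split whenever it happens to straddle the boundary of a full processor, regardless of its priority.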

Our Algorithm

[RTAS10] “width-first” partitioning with increasing priority order

Our Algorithm

Sort all tasks in increasing priority order

Select the processor on which the assigned utilization is the lowest

[Figure: animation of tasks 7 down to 1, taken in increasing priority order, being assigned one at a time to P1, P2, P3. Tasks 7 to 3 are assigned whole; tasks 2 and 1 are split into subtasks 2_1, 2_2 and 1_1, 1_2]

key feature: “width-first” partitioning with increasing priority order
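The “width-first” rule can be sketched in the same style. This is a simplified reading of the slides, not the authors' implementation: the function names are made up, each processor is treated as full once its load reaches Liu and Layland’s bound (or an explicitly passed `cap`), and the pre-assignment of heavy tasks discussed later in the talk is left out.

```python
def ll_bound(n):
    """Liu and Layland's bound N(2^(1/N) - 1)."""
    return n * (2 ** (1 / n) - 1)


def width_first_partition(utils, m, cap=None):
    """Worst-fit assignment with splitting.

    utils: task utilizations listed from lowest to highest priority.
    Each task goes to the non-full processor with the lowest assigned load;
    if it does not fit, it fills that processor and the rest is assigned next.
    Returns per-processor lists of (task_index, share), or None on overflow.
    """
    if cap is None:
        cap = ll_bound(len(utils))
    loads = [0.0] * m
    full = [False] * m
    assignment = [[] for _ in range(m)]
    for task, u in enumerate(utils):
        left = u
        while left > 1e-12:
            open_cpus = [p for p in range(m) if not full[p]]
            if not open_cpus:
                return None
            p = min(open_cpus, key=lambda q: loads[q])
            share = min(left, cap - loads[p])
            assignment[p].append((task, share))
            loads[p] += share
            left -= share
            if cap - loads[p] <= 1e-12:
                full[p] = True
    return assignment


# Hypothetical utilizations, lowest priority first, with an assumed cap of 0.75.
print(width_first_partition([0.5, 0.5, 0.5], m=2, cap=0.75))
```

Because a processor is closed as soon as a split fills it, every split piece is the last, and therefore highest-priority, entry on its processor in this sketch.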

Comparison

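[Figure: Previous (depth-first): P1 holds 8, 7, 6_1; P2 holds 5, 6_2, 4, 3_1; P3 holds 1, 2, 3_2. Ours (width-first): tasks 8 to 2 are spread over P1, P2, P3, and task 1 is split into 1_1 and 1_2]

Why is our algorithm better?

Ours: width-first & increasing priority order

Previous: depth-first & decreasing utilization order

By our algorithm, split tasks generally have higher priorities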

Split Task

Consider an extreme scenario: suppose each subtask has the highest priority on its processor

Then the subtasks are schedulable anyway; we do not need to worry about their deadlines

The difficult case is when the tail task is not on the top

The key point is to ensure the tail task is schedulable

[Figure: tasks 2 to 8 on three processors, with task 1 split into subtasks 1_1, 1_2, 1_3]

Split Task

Subtasks should execute in the correct order

[Figure: task τi, released at r with deadline d, is split into subtasks τi1, τi2, τi3 that execute one after another on P1, P2, P3]

Split Task

Subtasks get “shorter deadlines”

$\Delta_i = T_i - R_{i1} - R_{i2}$

[Figure: Ri1 and Ri2 mark the time taken by τi1 and τi2; ∆i is what remains for τi3 before d]

These two (τi1 and τi2) are on the top: no problem with schedulability

The third (τi3): ?

Why is the tail task schedulable?

The typical case: two CPUs, and task 2 is split into two subtasks, 2_1 and 2_2

[Figure: two CPUs with assigned loads X1, X2 and Y2, and the subtasks 2_1 (utilization U21) and 2_2 (utilization U22)]

As we always select the CPU with the lowest load assigned, we know Y2 <= U21 - U22, i.e. Y2 + U22 <= U21

That is, the “blocking factor” for the tail task is bounded.

Theorem

For a task set in which each task satisfies we have

get rid of this constraint

Problem of Heavy Tasks

[Figure: animation of tasks 9 down to 1, taken in increasing priority order, being assigned to P1, P2, P3; task 6 is split into subtasks 6_1 and 6_2 while higher-priority tasks are still to be assigned]

the tail subtask of a heavy task may have too low a priority level

Solution for Heavy Tasks

Pre-assigning the heavy tasks (that may have low priorities)

[Figure: animation of tasks 9 down to 1 being assigned to P1, P2, P3 with the heavy tasks pre-assigned; in the final assignment only task 1 is split, into subtasks 1_1 and 1_2]

avoid splitting heavy tasks (that may have low priorities)

Theorem

By introducing the pre-assignment mechanism, we have Liu and Layland’s utilization bound for all task sets!

Overhead

In both previous algorithms and ours

The number of task splittings is at most M-1

task splitting -> extra “migration/preemption”

Our algorithm on average has less task splitting

[Figure: P1, P2, P3 under ours (width-first) and under depth-first]

Implementation

Easy!

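One timer for each split task

Implemented as “task migration”

[Figure: task i runs as subtask i1 on P1 for Ci1 and then as subtask i2 on P2 until finished, next to higher prio tasks and lower prio tasks; the migration appears “as being preempted” on one processor and “as being resumed” on the other]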

Further Improvement

[Figure: tasks 1 to 9 on P1, P2, P3, each processor loaded below 100%. Schedulable?]

Using Liu and Layland’s Utilization Bound

Yes, schedulable by our algorithm

Utilization Bound is Pessimistic

The Liu and Layland utilization bound is sufficient but not necessary

Many task sets are actually schedulable even if the total utilization is larger than the bound

[Figure: processor P with the bound 0.69 marked below 1, and example tasks (1, 4), (2, 12), (1, 4), (2, 8)]

Exact Analysis

Exact Analysis: Response Time Analysis [Lehoczky_89]

pseudo-polynomial

[Figure: example tasks (1, 4), (2, 12), (1, 4), (2, 8) with the response time Rk of task k marked]

task k is schedulable iff Rk <= Tk
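The test Rk <= Tk can be sketched with the usual fixed-point recurrence for fixed-priority response times, R_k = C_k + Σ_j ⌈R_k / T_j⌉ C_j over all higher-priority tasks j. The slides do not spell this recurrence out, so it is an assumption here, as are the function name `response_time` and the reading of the (C, T) pairs on the slide as one four-task set.

```python
def response_time(tasks, k):
    """Worst-case response time of task k, or None if it exceeds its period.

    tasks: list of (C, T) with integer values, ordered from highest to lowest
    priority, deadline = period.
    """
    c_k, t_k = tasks[k]
    r = c_k
    while True:
        # Own execution time plus interference from higher-priority tasks.
        interference = sum(-(-r // t_j) * c_j for c_j, t_j in tasks[:k])
        nxt = c_k + interference
        if nxt > t_k:
            return None        # deadline missed
        if nxt == r:
            return r           # fixed point reached
        r = nxt


# (C, T) pairs as they appear on the slide, sorted by period (RMS order).
tasks = [(1, 4), (1, 4), (2, 8), (2, 12)]
print([response_time(tasks, k) for k in range(len(tasks))])
print(sum(c / t for c, t in tasks))
```

Under this reading every task meets its deadline although the total utilization is about 0.92, well above the utilization bound for four tasks.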

Utilization Bound vs. Exact Analysis

On single processors

[Figure: processor P with 100% capacity. Left: utilization bound test for RMS. Right: exact analysis for RMS [Lehoczky_89], 88%]

On Multiprocessors

Can we do something similar on multiprocessors?

[Figure: P1, P2, P3, each with 100% capacity. Left: utilization bound test (the algorithm introduced above). Right: ?]

Beyond Layland & Liu’s Bound [RTSS 2010, rejected!]

Our RTAS10 algorithm:

Increasing RMS priority order & worst-fit partitioning

Utilization test to determine the maximal load for each processor

The maximal load for each processor is bounded by 69.3%

Improved algorithm:

Employ Response Time Analysis to determine the maximal workload on each processor

More flexible behavior (more difficult to prove …)

Same utilization bound for the worst case, but much better average performance (by simulation)

I believe this is “the best algorithm” one can hope for in “fixed-priority multiprocessor scheduling”

Conclusions

The (multicore) Timing Problem is challenging

Difficult to guarantee Real-Time

Difficult to analyze/predict

Solutions: Partition & Isolation

Shared caches: coloring/partition

Memory bus/bandwidth: TDMA, ?

Processor cores: partition-based scheduling

Thanks!